TL;DR: From Zscaler to Cloudflare, Microsoft to Google, cybersecurity companies are deploying AI systems with unprecedented access to organizations’ most sensitive data—including cleartext passwords, SSL certificates, private keys, SOC logs, and NOC data. While marketed as security enhancements, these AI-powered systems create new systemic risks that few organizations fully understand or control.

The New Digital Panopticon

In Jeremy Bentham’s panopticon, a single watchman could observe all prisoners without them knowing whether they were being watched. Today’s cybersecurity industry has built something far more sophisticated: AI systems that can observe, analyze, and learn from virtually every digital interaction within an organization—often without clear boundaries on what data they access or how they use it.

The Zscaler controversy we explored earlier is just the tip of the iceberg. Across the cybersecurity industry, companies are deploying AI systems that require broad access to the most sensitive organizational data to function effectively. But unlike human analysts who work with specific datasets for defined purposes, these AI systems are designed to correlate patterns across vast data lakes that often include:

- Cleartext passwords captured during authentication processes

- SSL/TLS private keys and certificates

- Complete network traffic logs including full URLs and metadata

- SOC (Security Operations Center) logs containing threat intelligence

- NOC (Network Operations Center) data with infrastructure details

- Application logs with user behavior patterns

- Database queries and responses

- Email and communication metadata

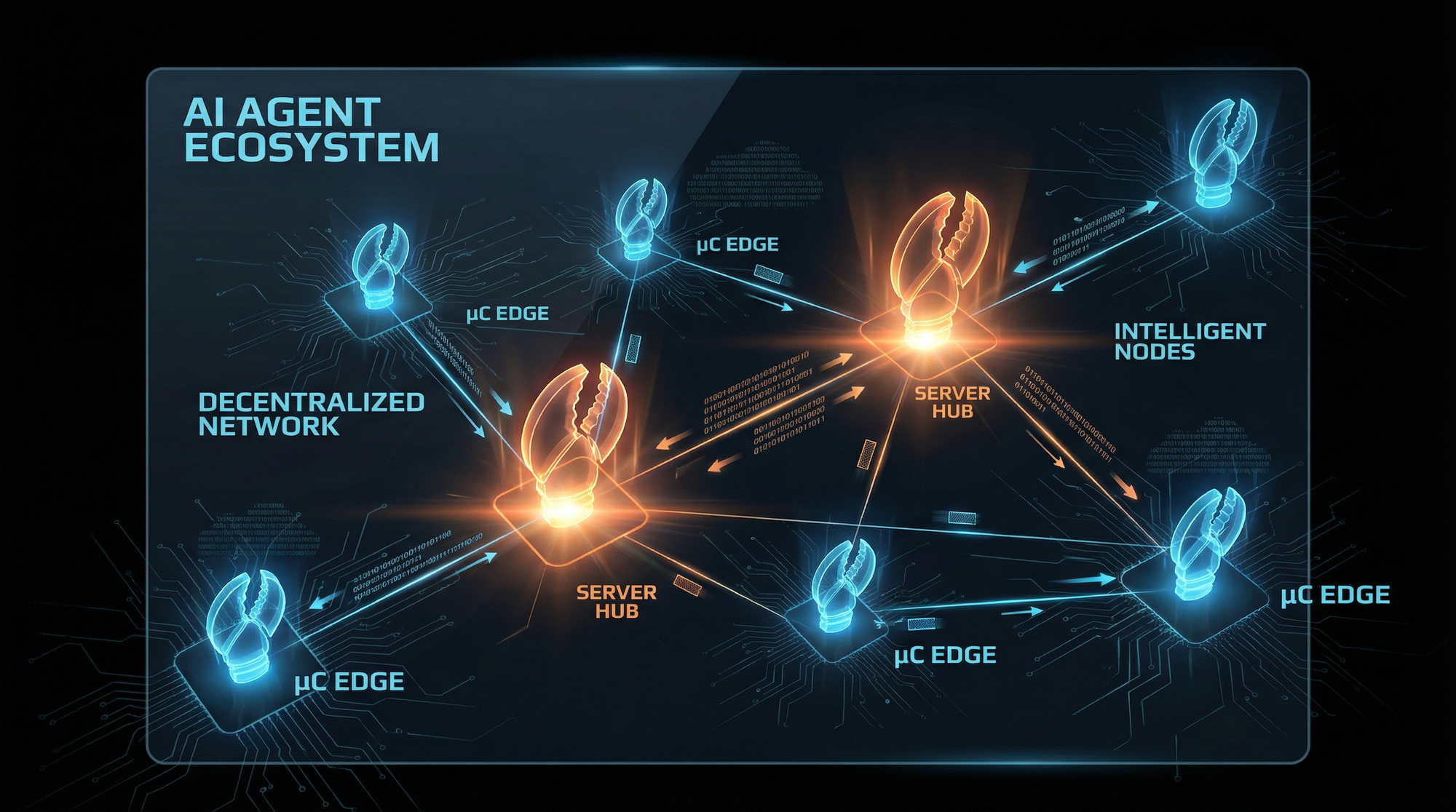

The AI-Native Security Architecture

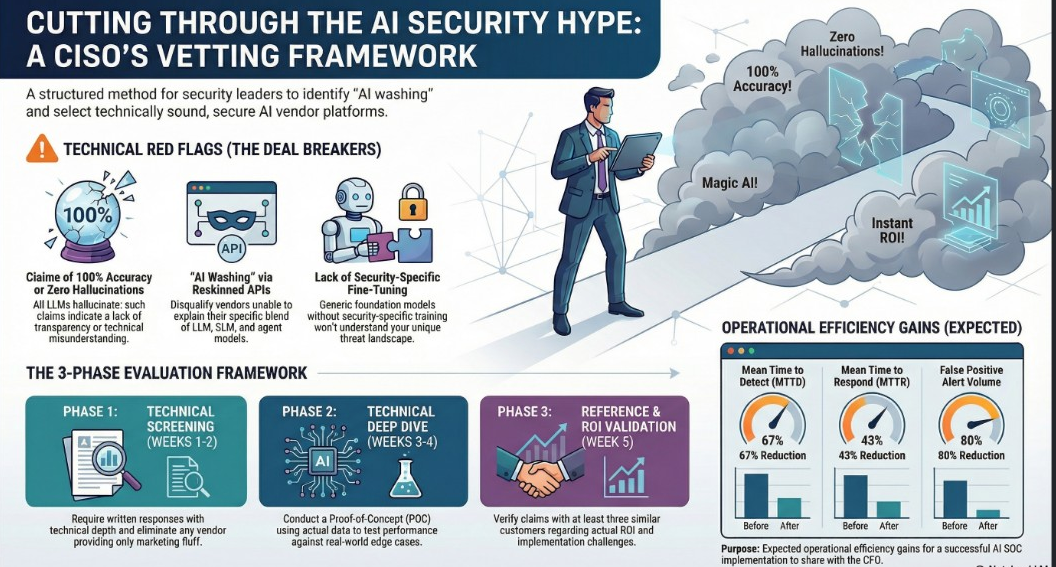

Security Operations Centers (SOCs) within cybersecurity teams are at a crossroads. They face well-documented challenges, ranging from increasing alert volumes to talent shortages and the limitations of legacy tools. At the same time, they must grapple with the capabilities of large language models (LLMs) and the implementation challenges of leveraging them effectively.

Modern cybersecurity platforms have evolved into what vendors call “AI-native” architectures. This represents a fundamental shift from traditional security tools that used AI as an add-on feature to platforms where AI is the core processing engine.

Microsoft’s Comprehensive AI Integration

Microsoft’s approach exemplifies this trend. According to Microsoft, Microsoft 365 Copilot uses Azure OpenAI services for processing, not OpenAI’s public services. When users enter prompts in Microsoft 365 Copilot, the information contained within those prompts, the data they retrieve, and the generated responses remain within the Microsoft 365 service boundary.

However, this “service boundary” includes access to:

- All organizational emails through Exchange integration

- Complete file systems via SharePoint and OneDrive

- Meeting transcripts and recordings from Teams

- Active Directory credentials and policies

- Application logs and user behavior data

While Microsoft states prompts, responses, and data accessed through Microsoft Graph aren’t used to train foundation LLMs, including those used by Microsoft 365 Copilot, the AI systems still require access to analyze this data in real-time to provide their services.

Google’s Enterprise AI Data Processing

Google Workspace with Gemini follows a similar model. According to Google, Gemini accesses customer data in order to provide personalized responses, such as summarizing a document in Google Docs or analyzing data in a Google Sheet. The company assures customers that their content is not used for any other customers, and that it is not human reviewed or used for generative AI model training outside their domain without permission.

But Google’s AI systems maintain access to:

- Gmail content and metadata including headers and attachments

- Drive files and collaboration patterns

- Calendar and meeting data

- Authentication logs and security events

- Workspace application usage patterns

Cloudflare’s Network-Level AI Processing

Cloudflare represents perhaps the most comprehensive data access scenario. The company says it uses the power of its global network to detect and mitigate more than 227 billion cybersecurity threats a day on average without compromising the privacy of its customers’ data.

However, Cloudflare’s AI systems process:

- Complete HTTP/HTTPS traffic including full URLs and request bodies

- SSL/TLS handshake data and certificate information

- DNS queries and responses

- DDoS attack patterns and mitigation responses

- Content Security Policy reports revealing application architectures

- Bot detection data including fingerprinting information

Cloudflare states that it may use samples of data transiting through its systems to train the ML models powering its web application firewall (WAF), which raises questions about the scope of this “sample” data.

The SOC/NOC AI Revolution

The most concerning development is the deployment of AI systems directly within Security Operations Centers (SOCs) and Network Operations Centers (NOCs). These environments contain the most sensitive operational data, including:

SOC Data Exposure

Security Operations Centers (SOCs) typically provide monitoring and detection services by collecting and analyzing MELT data (metrics, events, logs, and traces), the essential types of telemetry required to detect, investigate, and respond to security incidents.

This includes:

- Raw security event logs containing failed login attempts with usernames

- Network traffic analysis revealing internal system architectures

- Threat intelligence feeds with indicators of compromise

- Incident response data including forensic artifacts

- User behavior analytics tracking all system interactions

NOC Data Sensitivity

A Network Operations Center focuses on network installation, network maintenance, network performance, and availability. Its job is to ensure that network access, servers, apps, and data are always available and that they meet or exceed organizational needs and Service Level Agreements (SLAs).

NOC AI systems access:

- Network topology and configuration data

- Infrastructure performance metrics

- Capacity planning and utilization data

- Fault detection and diagnostic logs

- Service Level Agreement compliance data

Companies like Dropzone AI showcase how AI can automate complex cybersecurity investigations and help even resource-constrained organizations focus on the security alerts that matter. But this automation requires comprehensive access to investigate “all alerts” and “all enrichment and investigation avenues.”

The Certificate Authority and PKI Risk

One of the most underappreciated risks involves AI systems’ access to Public Key Infrastructure (PKI) data. Modern cybersecurity platforms often require access to:

- Private keys for SSL/TLS certificates to perform deep packet inspection

- Certificate Signing Requests (CSRs) containing organizational details

- Certificate Revocation Lists (CRLs) and OCSP responses

- Root and intermediate CA certificates

- Key escrow and recovery data

When a private key in a public-key infrastructure (PKI) environment is lost or stolen, compromised end-entity certificates can be used to impersonate a principal that is associated with it. If AI systems have broad access to private keys for legitimate security purposes, they become high-value targets for attackers and create new systemic risks if compromised.

The Cleartext Credential Problem

Perhaps most alarming is the routine access AI systems have to cleartext credentials during various security processes:

Authentication Monitoring

Security AI systems monitor authentication events, which can include:

- Password hashes during Active Directory synchronization

- Cleartext passwords during SSL bump inspection

- API keys and tokens in application logs

- Service account credentials in configuration files

- Database connection strings with embedded passwords
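
One common way to shrink this exposure is to scrub recognizable secrets from log lines locally, before they are forwarded to any AI pipeline. The sketch below is a minimal illustration under stated assumptions, not any vendor's implementation: the `redact` function, the three patterns, and the sample log lines are all hypothetical, and real logs would need far broader pattern coverage.

```python
import re

# Hypothetical patterns for illustration only; real logs need broader coverage.
SECRET_PATTERNS = [
    # key=value or key: value secrets such as password=..., api_key=..., token=...
    (re.compile(r"(?i)\b(password|passwd|pwd|api[_-]?key|token|secret)(\s*[=:]\s*)([^\s;&,]+)"),
     r"\1\2[REDACTED]"),
    # Credentials embedded in connection URLs: scheme://user:pass@host
    (re.compile(r"(\w+://[^\s:/@]+:)([^\s@]+)(@)"), r"\1[REDACTED]\3"),
    # HTTP Authorization headers (Bearer or Basic)
    (re.compile(r"(?i)(authorization:\s*(?:bearer|basic)\s+)(\S+)"), r"\1[REDACTED]"),
]

def redact(line: str) -> str:
    """Return the log line with recognizable secrets replaced by a marker."""
    for pattern, replacement in SECRET_PATTERNS:
        line = pattern.sub(replacement, line)
    return line

# Invented sample lines covering the list items above.
samples = [
    "login user=alice password=hunter2 ok",
    "db=postgres://svc:s3cr3t@db.internal:5432/app",
    "Authorization: Bearer abc.def.ghi",
]
cleaned = [redact(s) for s in samples]
```

Pattern-based scrubbing only catches secrets that look like the patterns it knows, so it reduces rather than eliminates what downstream systems can see.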

Network Security Inspection

Entities exposing credentials in clear text are risky not only for the exposed entity in question, but for your entire organization. The increased risk is because unsecure traffic such as LDAP simple-bind is highly susceptible to interception by attacker-in-the-middle attacks.

AI systems designed to detect these exposures must themselves process the cleartext credentials to identify them, creating a circular security risk.

The Systemic Risks

Data Aggregation and Correlation

Unlike traditional security tools that operate on specific data streams, AI systems excel at finding patterns across disparate data sources. This means:

- Cross-system correlation can reveal information that individual systems were designed to protect

- Behavioral pattern analysis can infer sensitive information from seemingly innocuous data

- Predictive modeling can anticipate user actions based on historical patterns
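
A toy example makes the correlation risk concrete. Here two log sources that are individually innocuous (a proxy log with no user identities and an authentication log with no browsing data) are joined on a shared key. All records, field names, and the `correlate` function are invented for illustration.

```python
from collections import defaultdict

# Hypothetical, invented records for illustration only.
# Source A: web proxy log keyed by internal IP, with no user identity.
proxy_log = [
    {"ip": "10.0.0.5", "hour": 9, "host": "oncology-clinic.example"},
    {"ip": "10.0.0.7", "hour": 9, "host": "news.example"},
    {"ip": "10.0.0.5", "hour": 14, "host": "jobs.example"},
]
# Source B: authentication log mapping IPs to users per hour, with no browsing data.
auth_log = [
    {"ip": "10.0.0.5", "hour": 9, "user": "alice"},
    {"ip": "10.0.0.7", "hour": 9, "user": "bob"},
    {"ip": "10.0.0.5", "hour": 14, "user": "carol"},
]

def correlate(proxy, auth):
    """Join the two sources on (ip, hour) to attribute browsing to users."""
    who = {(a["ip"], a["hour"]): a["user"] for a in auth}
    visited = defaultdict(list)
    for p in proxy:
        user = who.get((p["ip"], p["hour"]))
        if user is not None:
            visited[user].append(p["host"])
    return dict(visited)

profiles = correlate(proxy_log, auth_log)
```

Neither source alone says anything about an individual's health or job search, but the joined `profiles` mapping ties each named user to the hosts they visited.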

Third-Party AI Model Dependencies

Many cybersecurity companies rely on third-party AI models or services:

- Cloud AI APIs that may process customer data outside organizational boundaries

- Pre-trained models that may have been trained on competitor data

- Model-as-a-Service platforms with unclear data retention policies

- Open-source AI frameworks with potential supply chain risks

Vendor Lock-in and Data Portability

Organizations deploying AI-native security platforms often find:

- Proprietary data formats that make migration difficult

- AI model dependencies that require continued vendor relationships

- Historical data requirements for AI training and effectiveness

- Integration complexities that create switching costs

The Regulatory and Compliance Gaps

Current regulatory frameworks are poorly equipped to address AI-native cybersecurity platforms:

GDPR Challenges

- Purpose limitation becomes unclear when AI systems analyze data for “security purposes”

- Data minimization conflicts with AI systems’ need for comprehensive datasets

- Right to explanation is technically difficult with complex AI models

- Data portability may be impossible with AI-processed insights

Industry Compliance Standards

- PCI DSS doesn’t adequately address AI access to payment data

- HIPAA lacks specific provisions for AI processing of health information

- SOX controls may not account for AI-driven financial data analysis

- FedRAMP certification processes don’t fully evaluate AI data flows

Case Studies in Risk Materialization

The CrowdStrike Paradigm

CrowdStrike’s platform demonstrates both the power and risks of AI-native security. CrowdStrike Falcon, which the company markets as an AI-native cybersecurity platform, processes vast amounts of endpoint data. One example of such telemetry is Windows User Access Logging (UAL), which records user access to various services running on a Windows Server. The access is logged to databases on disk that contain information on the type of service accessed, the user account that performed the access, and the source IP address from which the access occurred.

This comprehensive logging enables powerful security analytics but also creates a detailed surveillance infrastructure that, if compromised, provides attackers with complete organizational insight.

The Certificate Authority Ecosystem

Certificate Authorities prevent falsified entities and manage the life cycle of any given number of digital certificates within the system. Much like the state government issuing you a license, certificate authorities vet the organizations seeking certificates and issue one based on their findings.

When AI systems have broad access to CA operations, they can potentially:

- Issue unauthorized certificates if compromised

- Revoke legitimate certificates causing service outages

- Extract private keys for malicious use

- Modify certificate transparency logs to hide activities

Building Safer AI-Security Integration

Data Access Minimization

Organizations should implement strict boundaries on AI data access:

- Purpose-specific datasets rather than comprehensive data lakes

- Time-bounded access with automatic data purging

- Role-based AI permissions limiting system capabilities

- Air-gapped processing for the most sensitive operations
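
The first two items can be sketched as a per-purpose field allow-list combined with an expiry stamp. This is a minimal sketch, assuming a simple in-memory store; the purposes, field names, `minimize`, and `purge_expired` are hypothetical and do not correspond to any specific product.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone

# Hypothetical purposes and field allow-lists; names are illustrative assumptions.
ALLOWED_FIELDS = {
    "phishing_triage": {"sender_domain", "subject", "url_hosts"},
    "capacity_planning": {"link_id", "utilization_pct"},
}

@dataclass
class StoredRecord:
    purpose: str
    data: dict
    expires_at: datetime

def minimize(record: dict, purpose: str, ttl: timedelta, now: datetime) -> StoredRecord:
    """Keep only fields allow-listed for this purpose and stamp an expiry."""
    allowed = ALLOWED_FIELDS[purpose]  # unknown purposes raise KeyError (deny by default)
    kept = {k: v for k, v in record.items() if k in allowed}
    return StoredRecord(purpose, kept, now + ttl)

def purge_expired(store: list, now: datetime) -> list:
    """Return only records whose retention window has not elapsed."""
    return [r for r in store if r.expires_at > now]

now = datetime(2025, 1, 1, tzinfo=timezone.utc)
raw = {"sender_domain": "example.com", "subject": "Invoice",
       "body": "full message text", "recipient": "alice@corp.example"}
rec = minimize(raw, "phishing_triage", timedelta(days=7), now)
# rec.data keeps only sender_domain and subject; body and recipient are dropped.
```

Filtering at ingestion means the AI system never holds the fields it was not granted, which is a stronger guarantee than hiding them at query time.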

Transparency and Auditability

Security AI systems should provide:

- Detailed logging of all data access and processing

- Explainable AI outputs that can be audited and reviewed

- Data lineage tracking showing how insights were derived

- Regular access reviews and permission audits
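
Detailed access logging is more useful when the log itself is tamper-evident. One well-known technique is a hash chain, in which each entry's hash covers the previous entry's hash. The following is a minimal sketch under stated assumptions: `append_entry`, `verify`, and the actor and resource names are hypothetical, and timestamps are omitted to keep the example deterministic.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry

def _digest(body: dict) -> str:
    """Hash a canonical JSON encoding of the entry body."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()

def append_entry(log: list, actor: str, resource: str, action: str) -> dict:
    """Append an access record whose hash covers the previous entry's hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "resource": resource, "action": action, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry

def verify(log: list) -> bool:
    """Recompute every hash and check that each entry links to its predecessor."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("actor", "resource", "action", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True

audit_log = []
append_entry(audit_log, "ai-triage", "mailbox:alice", "read")
append_entry(audit_log, "ai-triage", "file:q3-forecast", "read")
intact = verify(audit_log)
```

A chain like this only detects edits by someone who cannot rewrite the whole log, so the latest hash should also be recorded somewhere the AI platform cannot modify.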

Vendor Due Diligence

Organizations must evaluate:

- Data processing locations and jurisdictions

- Model training practices and data sources

- Third-party dependencies and supply chain risks

- Data retention and deletion policies

- Incident response and breach notification procedures

Regulatory Evolution

Policymakers and standards bodies must address:

- AI-specific data protection requirements beyond current frameworks

- Cybersecurity AI certification and assessment standards

- Cross-border data flow rules for AI processing

- Mandatory transparency reporting for AI data usage

The Path Forward

The cybersecurity industry’s embrace of AI represents both tremendous opportunity and significant risk. While AI-powered security tools can detect threats that would otherwise go unnoticed, they also create new attack surfaces and systemic risks that the industry is only beginning to understand.

Organizations must move beyond vendor marketing claims to thoroughly assess:

- What data their security AI systems actually access

- How that data is processed, stored, and potentially shared

- Who has access to AI insights and derived intelligence

- What controls exist to prevent misuse or unauthorized access

- How to detect when AI systems are compromised or behaving unexpectedly
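
For the last question, one simple starting point is to baseline how much of each data category an AI system normally reads and flag large deviations. The sketch below assumes daily read counts per category are already being collected; `unusual_access`, the category names, the counts, and the threshold of three standard deviations are all illustrative assumptions rather than a recommended detection rule.

```python
from statistics import mean, pstdev

def unusual_access(history: dict, today: dict, k: float = 3.0) -> list:
    """Flag data categories where today's read count exceeds mean + k * stddev."""
    flagged = []
    for category, count in today.items():
        past = history.get(category)
        if not past:
            flagged.append(category)  # a never-before-seen category is itself notable
            continue
        threshold = mean(past) + k * pstdev(past)
        if count > threshold:
            flagged.append(category)
    return sorted(flagged)

# Invented daily read counts for a hypothetical security AI service account.
history = {
    "email_metadata": [100, 110, 90, 105, 95],
    "private_keys": [0, 0, 1, 0, 0],
}
today = {"email_metadata": 108, "private_keys": 40, "hr_files": 3}
alerts = unusual_access(history, today)
```

With this invented data, the flagged categories are the sudden burst of private key reads and the first-ever access to HR files, while the ordinary email metadata volume passes.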

The Zscaler controversy should serve as a wake-up call: if companies can’t clearly explain their AI data usage when directly challenged under GDPR, how many other cybersecurity vendors are operating AI systems with unclear or undisclosed data access?

Conclusion: The Security Paradox

We face a fundamental paradox: the very AI systems designed to protect our most sensitive data require unprecedented access to that data to function effectively. This creates a concentration of risk that, if exploited, could be far more damaging than the threats these systems are designed to prevent.

The path forward requires industry-wide commitment to:

- Transparent data usage policies and practices

- Minimalist AI architectures that limit unnecessary data access

- Robust oversight and auditing mechanisms

- Regulatory frameworks that address AI-specific risks

- Customer control over AI data processing decisions

As organizations increasingly rely on AI-powered cybersecurity solutions, they must demand the same level of scrutiny and control over these systems that they would expect for any critical security infrastructure. The alternative—blind trust in black box AI systems with comprehensive data access—represents an unacceptable security risk that could undermine the very protection these systems promise to provide.

The cybersecurity industry must prove that its AI systems can be both powerful and trustworthy. Until then, organizations should proceed with extreme caution when granting AI systems access to their most sensitive data—regardless of the security benefits promised in return.

This analysis is based on publicly available information and vendor documentation. Organizations should conduct their own thorough security assessments before deploying AI-powered cybersecurity solutions and should regularly audit their data access and processing practices.