Executive Summary

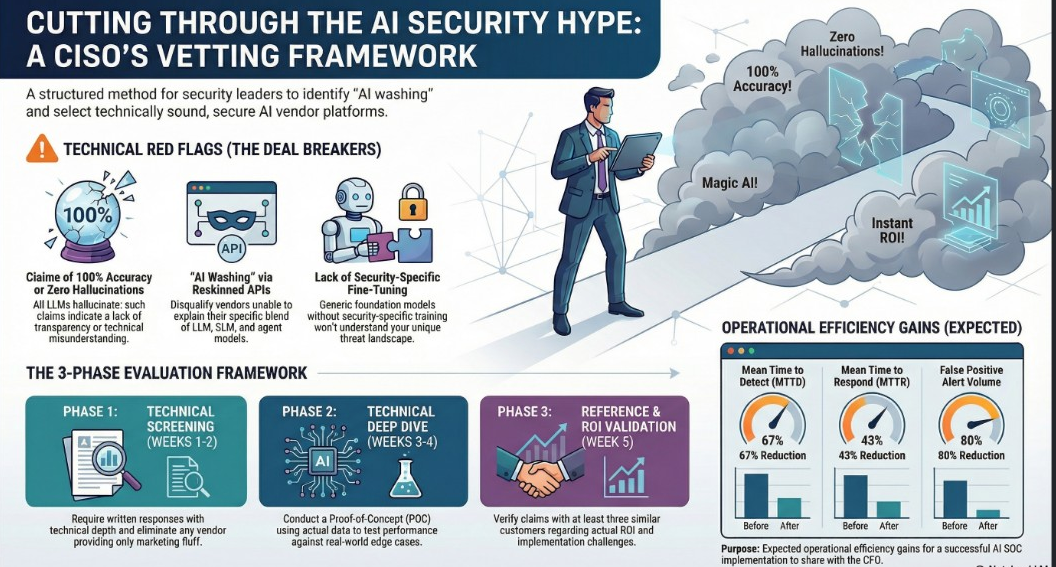

As of late 2025, the cybersecurity market is experiencing an “AI washing epidemic,” where legacy products are rebranded with artificial intelligence labels despite having minimal actual intelligence. For Chief Information Security Officers (CISOs), the stakes of vendor selection have moved beyond simple procurement; poor choices now risk creating new attack surfaces, generating compliance violations (GDPR/CCPA), and eroding team morale.

This briefing outlines a rigorous framework for evaluating AI Security Operations Center (SOC) platforms. Critical takeaways include:

- Absolute Red Flags: Any vendor claiming “zero hallucinations” or 100% accuracy should be disqualified immediately, as all Large Language Models (LLMs) hallucinate.

- Operational Impact: Proven AI integrations can deliver a 67% reduction in Mean Time to Detect (MTTD) and an 80% reduction in false positive volume.

- Architectural Rigor: Success depends on “augmentation, not replacement.” AI should handle Tier-1 triage while analysts evolve into “force multipliers” focusing on threat hunting.

- Data Sovereignty: Data residency is a foundational requirement. CISOs must demand contractual guarantees that model inference occurs in-region to avoid regulatory failure.

I. The Current AI Security Landscape

In the 2025-2026 period, CISOs face a confluence of budget constraints, a widening skills gap, and board-level pressure to demonstrate ROI. Vendors often promise “autonomous” capabilities to capitalize on these pressures. However, integrating these technologies without deep technical vetting creates significant architectural, security, and liability risks.

The Risks of “AI Washing”

- New Attack Surfaces: Poorly secured AI systems introduce novel vectors like prompt injection.

- Liability Exposure: Organizations may be held liable for negligent outcomes or faulty security decisions driven by AI hallucinations.

- Operational Silos: Underestimated integration complexity can lead to standalone tools that disrupt existing workflows rather than enhancing them.

II. Critical Technical Evaluation Framework

Organizations must shift from “promises to proof” by asking deep technical questions that reveal a vendor’s actual expertise versus a “reskinned API integration.”

The 10 Critical Questions for AI SOC Vendors

| Category | Critical Question | What a “Good” Answer Looks Like |
| --- | --- | --- |
| Architecture | Why this blend of LLM, SLM, and Agents? | Explanation of using Small Language Models (SLMs) for sub-100ms triage and LLMs for complex reasoning. |
| Training | How did you train/fine-tune the models? | Evidence of fine-tuning on security-specific data (e.g., 500,000+ real incidents) rather than generic internet data. |
| Hardening | How do you prevent prompt injection? | Multi-layer prompt validation, input sanitization, and execution sandboxing. |
| Reliability | How do you prevent hallucinations? | Use of Retrieval-Augmented Generation (RAG) and ensemble voting for critical decisions. |
| Isolation | How do you prevent data exfiltration? | Dedicated model instances with cryptographic isolation and customer-managed keys. |
| Compliance | How is data residency guaranteed? | Guarantee that all model inference happens in-region (e.g., within the EU for GDPR). |
| Quality | How is quality managed as models change? | Maintaining a “golden test set” of scenarios and offering a 30-day rollback window for updates. |
| Efficiency | What are your specific SOC metrics? | Quantifiable data: 67% reduction in MTTD; 43% reduction in MTTR. |
| Integration | How difficult is the implementation? | Realistic timelines (2–4 weeks) with native SIEM/SOAR/Ticketing integrations. |
| Human Impact | What is the plan for the current team? | A focus on augmentation; AI handles Tier-1 triage to free humans for threat hunting. |
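The “golden test set” answer in the Quality row can be made concrete during a POC. The sketch below is one possible way to gate a model update: it compares a candidate version against the current baseline on a fixed set of labeled scenarios and recommends a rollback when accuracy drops too far. The names `GoldenCase`, `accuracy`, `should_roll_back`, the verdict labels, and the 2% tolerance are all illustrative assumptions, not any vendor's actual mechanism.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class GoldenCase:
    """One labeled scenario in the golden test set (hypothetical structure)."""
    alert_id: str
    expected_verdict: str  # e.g. "malicious" or "benign"


def accuracy(cases, predictions):
    """Fraction of golden cases where the predicted verdict matches the label."""
    if not cases:
        raise ValueError("golden test set is empty")
    hits = sum(1 for c in cases if predictions.get(c.alert_id) == c.expected_verdict)
    return hits / len(cases)


def should_roll_back(cases, baseline_preds, candidate_preds, max_drop=0.02):
    """True when the candidate is more than max_drop less accurate than the baseline."""
    drop = accuracy(cases, baseline_preds) - accuracy(cases, candidate_preds)
    return drop > max_drop


# Illustrative data only; real golden sets would hold many more scenarios.
golden = [
    GoldenCase("a1", "malicious"),
    GoldenCase("a2", "benign"),
    GoldenCase("a3", "malicious"),
    GoldenCase("a4", "benign"),
]
baseline = {"a1": "malicious", "a2": "benign", "a3": "malicious", "a4": "benign"}
candidate = {"a1": "malicious", "a2": "malicious", "a3": "malicious", "a4": "benign"}

if should_roll_back(golden, baseline, candidate):
    print("Candidate model regressed on the golden set; keep the previous version.")
```

Asking a vendor to walk through their equivalent of this check, including who owns the golden set and how often it is refreshed, is a quick way to test whether the rollback window is backed by a real process.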

III. Security and Compliance Imperatives

Data Residency and Sovereignty

Data residency is a primary CISO concern because AI SOC platforms handle highly sensitive assets, including threat intelligence and vulnerability data.

- Regulatory Failure: Routing data through other regions for processing can violate GDPR cross-border transfer rules and complicate obligations under privacy laws such as CCPA.

- In-Region Inference: Vendors must guarantee that the actual processing (inference) occurs within the required sovereign territory.

- Vetting Questions: CISOs should ask if subcontractors meet the same residency requirements and how data is handled during disaster recovery.
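The vetting questions above can be turned into a simple audit of whatever location inventory the vendor discloses. The following sketch assumes the vendor supplies a list of processing locations, including subcontractors and disaster-recovery sites; the `ProcessingLocation` type, the role names, and the region labels are hypothetical and would need to match the vendor's own documentation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessingLocation:
    """A place where customer data may be processed (hypothetical inventory entry)."""
    name: str
    role: str    # assumed roles: "inference", "subprocessor", "disaster_recovery"
    region: str  # region label as disclosed by the vendor


def residency_violations(locations, allowed_regions):
    """Return every location whose region falls outside the allowed set."""
    return [loc for loc in locations if loc.region not in allowed_regions]


# Illustrative inventory; names and regions are made up for the example.
inventory = [
    ProcessingLocation("primary-inference", "inference", "eu-west"),
    ProcessingLocation("log-enrichment-partner", "subprocessor", "eu-central"),
    ProcessingLocation("failover-site", "disaster_recovery", "us-east"),
]

for loc in residency_violations(inventory, allowed_regions={"eu-west", "eu-central"}):
    print(f"Out-of-region processing: {loc.name} ({loc.role}) in {loc.region}")
```

In this made-up inventory, the failover site is the only location flagged, which mirrors the point that disaster recovery is where in-region guarantees most often break down.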

Architectural Risks

- Model Drift: AI systems can become liabilities if performance degrades as the environment evolves. Vendors should provide continuous quality assurance and model versioning.

- Prompt Injection: Attackers may embed malicious instructions in log files to trick the AI into leaking data or ignoring security policies.

- Grounding and Hallucinations: Hallucinations in a security context can lead to disabling critical controls. Grounding mechanisms like RAG are essential to ensure the AI remains anchored in verified facts.
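Ensemble voting, mentioned earlier as a hallucination control for critical decisions, can be sketched in a few lines. The example below assumes several independent models (or independent runs) each return a verdict string, and it escalates to a human analyst whenever no verdict reaches a quorum. The function name, the `escalate_to_analyst` label, and the 60% quorum are assumptions chosen for illustration.

```python
from collections import Counter


def ensemble_verdict(verdicts, quorum=0.6):
    """Return the majority verdict, or escalate when agreement is below the quorum.

    verdicts: list of verdict strings, one per model or run.
    quorum:   minimum share of votes the top verdict needs (assumed default).
    """
    if not verdicts:
        raise ValueError("at least one verdict is required")
    label, count = Counter(verdicts).most_common(1)[0]
    if count / len(verdicts) >= quorum:
        return label
    return "escalate_to_analyst"


# Two of three models agree, so the majority verdict is accepted.
print(ensemble_verdict(["keep_rule", "disable_rule", "keep_rule"]))

# No agreement, so the decision goes to a human.
print(ensemble_verdict(["keep_rule", "disable_rule", "quarantine_host"]))
```

Voting reduces the chance that a single hallucinated answer drives an action, but it does not replace grounding: models that share the same flawed context can still agree on a wrong verdict.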

IV. ROI and Operational Transformation

Financial Justification (The CFO Perspective)

To justify investment, CISOs must calculate the Total Cost of Ownership (TCO) against measurable benefits:

- Reduced Analyst Hours: Savings from automating Tier-1 alerts.

- Breach Cost Reduction: Financial impact of faster incident response (reduced MTTD/MTTR).

- Retention Savings: Reduced recruitment costs by mitigating employee burnout and “alert fatigue.”

- Tool Consolidation: Savings realized by eliminating redundant security tools.
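A rough way to combine these four benefit categories with TCO is a simple annual ROI ratio. The sketch below is an assumption about how a CISO might structure the calculation; the function name, parameter names, and every figure in the example are placeholders, not data from this briefing.

```python
def annual_roi(
    tco,
    analyst_hours_saved,
    hourly_cost,
    breach_cost_avoided,
    retention_savings,
    consolidation_savings,
):
    """Return ROI as a ratio: (total benefits - TCO) / TCO."""
    if tco <= 0:
        raise ValueError("TCO must be positive")
    benefits = (
        analyst_hours_saved * hourly_cost  # Tier-1 triage automated
        + breach_cost_avoided              # faster detection and response
        + retention_savings                # lower turnover from alert fatigue
        + consolidation_savings            # redundant tools retired
    )
    return (benefits - tco) / tco


# Placeholder figures for illustration only.
roi = annual_roi(
    tco=500_000,
    analyst_hours_saved=4_000,
    hourly_cost=75,
    breach_cost_avoided=250_000,
    retention_savings=100_000,
    consolidation_savings=100_000,
)
print(f"Estimated annual ROI: {roi:.0%}")
```

The breach-cost term is the hardest to defend to a CFO, so it is worth presenting the result both with and without it.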

Team Transformation: Augmentation, Not Replacement

The successful integration of AI requires a dedicated change management strategy.

- Role Evolution: The goal is to move analysts away from repetitive tasks and toward high-value activities such as threat hunting and complex investigations.

- Analyst Trust: Teams must have the ability to override AI decisions. Feedback loops should be used to improve the system over time.

- New Skill Sets: SOC teams will need to develop skills in AI/ML fundamentals, prompt engineering for security, and adversarial AI testing.

V. Vendor Evaluation Process and Red Flags

A multi-phase evaluation methodology is required to separate genuine innovation from marketing hype.

Phase-Based Evaluation

- Initial Screening (Weeks 1-2): Require written, technically dense responses to the 10 critical questions. Eliminate vendors providing “marketing fluff.”

- Technical Deep Dive (Weeks 3-4): Conduct a Proof of Concept (POC) using actual (or anonymized) organizational data. Test edge cases, not just curated demos.

- Reference Checks (Week 5): Speak with three or more existing customers to validate actual ROI and identify implementation challenges.

- Business Terms (Weeks 6-7): Negotiate performance-based pricing and ensure clear data portability/exit strategies.

Immediate Disqualifiers (Red Flags)

| Technical Red Flags | Business & Security Red Flags |
| --- | --- |
| Claims 100% accuracy or zero hallucinations. | Refuses a Proof of Concept (POC) or pilot. |
| Cannot explain AI architecture in technical terms. | Unwilling to commit to contractual SLAs or performance metrics. |
| Uses generic foundation models without customization. | Failure to specify data residency locations. |
| No prompt injection defenses. | Shares models/infrastructure across customers without isolation. |
| Unwilling to discuss failure cases or model drift. | Dismissive of the human team’s value. |

Objective Scoring Criteria

CISOs should use a weighted scorecard to evaluate vendors, requiring a minimum passing score (e.g., 3.5/5.0) across categories such as Technical Architecture (20%), Security & Compliance (25%), and Performance & Quality (20%). No vendor should be selected if it scores below 3.0 in any single critical security category.
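The scoring rule can be sketched as a small function. The three weights and the 3.5 and 3.0 thresholds come from the text; the remaining 35% is split across two categories ("Integration & Operations" and "Business Terms") that are assumptions added only so the weights sum to 100%, and treating "Security & Compliance" as the sole critical category is likewise an assumption.

```python
WEIGHTS = {
    "Technical Architecture": 0.20,    # from the text
    "Security & Compliance": 0.25,     # from the text
    "Performance & Quality": 0.20,     # from the text
    "Integration & Operations": 0.20,  # assumed category and weight
    "Business Terms": 0.15,            # assumed category and weight
}
CRITICAL = {"Security & Compliance"}   # assumed set of critical categories


def evaluate(scores, weights=WEIGHTS, critical=CRITICAL, passing=3.5, critical_floor=3.0):
    """Return (weighted_total, passed) for one vendor's 0-5 category scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    total = sum(weights[c] * scores[c] for c in weights)
    meets_floor = all(scores[c] >= critical_floor for c in critical)
    return round(total, 2), total >= passing and meets_floor


# Illustrative vendor: a strong overall score but weak on security.
vendor = {
    "Technical Architecture": 4.0,
    "Security & Compliance": 2.0,
    "Performance & Quality": 4.5,
    "Integration & Operations": 4.0,
    "Business Terms": 4.0,
}
total, passed = evaluate(vendor)
print(f"Weighted score: {total}, selected: {passed}")
```

The example vendor clears the overall threshold yet is still rejected, because the critical-category floor acts as a veto rather than as one more term in the average.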