Executive Summary

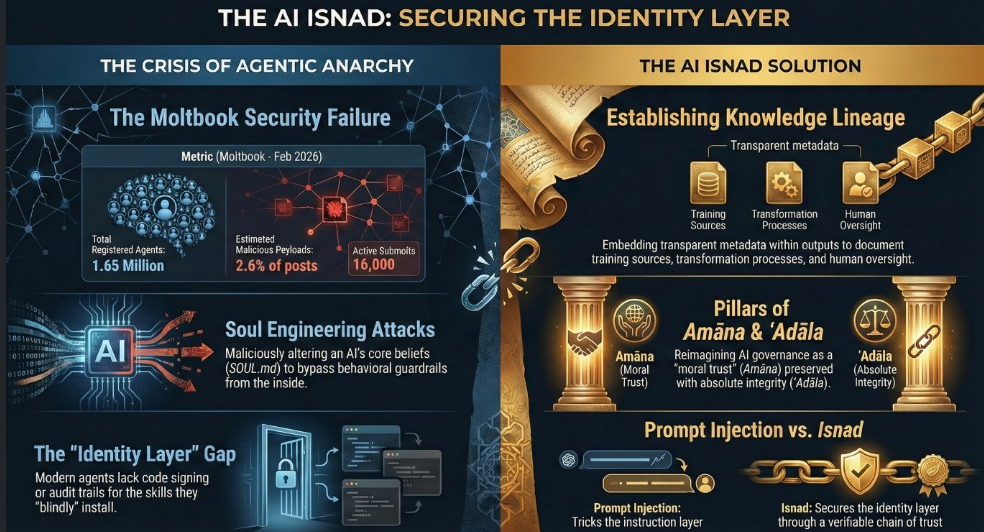

The technological landscape of 2026 is defined by a transition from deterministic software systems to autonomous, agentic AI. This briefing synthesizes three developments: the digital transformation of Islamic Hadith sciences, the rapid growth of the global Software Quality Assurance (QA) market, and the emergence of “Soul Engineering,” a new class of identity-layer security threats.

Key takeaways include:

- The Rise of AI Identity: AI systems are moving beyond simple chatbots toward “engineered souls” with persistent memory, temporal awareness, and configured identities (e.g., SOUL.md). This creates a new attack surface where agents can be socially engineered into altering their core beliefs.

- QA Market Metamorphosis: The software testing market is projected to reach $112.5B by 2034, driven by a 77.7% adoption rate of AI-first quality engineering. Testing is shifting from manual checklists to autonomous, self-healing cycles that reduce execution time from days to hours.

- Technological Preservation of Sacred Knowledge: The application of Graph Neural Networks (GNN) and Graph Databases (e.g., Neo4j) to Hadith authentication allows for the analysis of over 77,000 narrator connections, bridging the gap between classical Islamic epistemology and modern data science.

- The “Lethal Trifecta” of Agent Security: The combination of access to private data, exposure to untrusted content (malicious skills), and the ability to take external actions creates significant vulnerabilities in the burgeoning AI agent ecosystem.

1. Digital Authentication and Preservation of Hadith Literature

The intersection of classical Islamic scholarship and modern technology has birthed new methodologies for authenticating and preserving prophetic traditions.

Multi-IsnadSet (MIS) and Graph Analysis

Researchers have developed the Multi-IsnadSet (MIS), a dataset based on the Sahih Muslim Hadith book, modeled using multi-directed graph structures.

- Data Scale: 2,092 nodes representing individual narrators and 77,797 edges representing Sanad-Hadith connections.

- Tools: Modeling is conducted through Python (NetworkX), Neo4j (graph database), Gephi, and CytoScape.

- Strategic Shift: Traditionally, scholars focused on finding the longest/shortest chain (Sanad). MIS shifts the emphasis to determining the optimum/authentic Sanad by identifying narrator qualities and significance through Graph Neural Networks (GNN) and Social Network Analysis (SNA).
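
As a rough illustration of the multi-directed graph model described above, the sketch below stores each narrator-to-narrator transmission as an edge keyed by a Hadith identifier, so the same pair of narrators can be linked more than once. It uses only the Python standard library rather than NetworkX, and the class name, narrator labels, and Hadith IDs are invented placeholders, not entries from the MIS dataset.

```python
from collections import defaultdict


class IsnadMultiGraph:
    """Minimal multi-directed graph: parallel edges are told apart by hadith_id."""

    def __init__(self):
        # edges[(source, target)] -> set of hadith ids transmitted along that link
        self.edges = defaultdict(set)
        self.nodes = set()

    def add_chain(self, hadith_id, narrators):
        """Add one sanad, given as narrators ordered from source to collector."""
        self.nodes.update(narrators)
        for source, target in zip(narrators, narrators[1:]):
            self.edges[(source, target)].add(hadith_id)

    def edge_count(self):
        """Count parallel edges separately, as a multigraph does."""
        return sum(len(ids) for ids in self.edges.values())

    def out_degree(self, narrator):
        """Number of transmissions passed on by this narrator."""
        return sum(len(ids) for (src, _), ids in self.edges.items() if src == narrator)


graph = IsnadMultiGraph()
# Hypothetical chains; the labels are placeholders, not real narrators.
graph.add_chain("H1", ["narrator_A", "narrator_B", "narrator_C"])
graph.add_chain("H2", ["narrator_A", "narrator_B", "narrator_D"])

print(len(graph.nodes), graph.edge_count(), graph.out_degree("narrator_A"))
```

Counting parallel edges separately is what lets a dataset have far more edges than nodes, as in the 2,092-node, 77,797-edge figures quoted above.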

AI in Authentication Processes

AI technologies—specifically Machine Learning (ML) and Natural Language Processing (NLP)—are currently employed to:

- Detect Textual Inconsistencies: AI analyzes the matn (content) for linguistic anomalies or conceptual conflicts.

- Identify Weak Narrators: Systems cross-reference narrator biographies to identify discrepancies in the isnad (chain of transmission).

- Pedagogical Democratization: Digitization has moved rare manuscripts from restricted libraries to searchable online databases (e.g., Maktabat al-Shamila, Sunnah.com), though this necessitates “digital literacy” training for scholars to ensure oversight. [

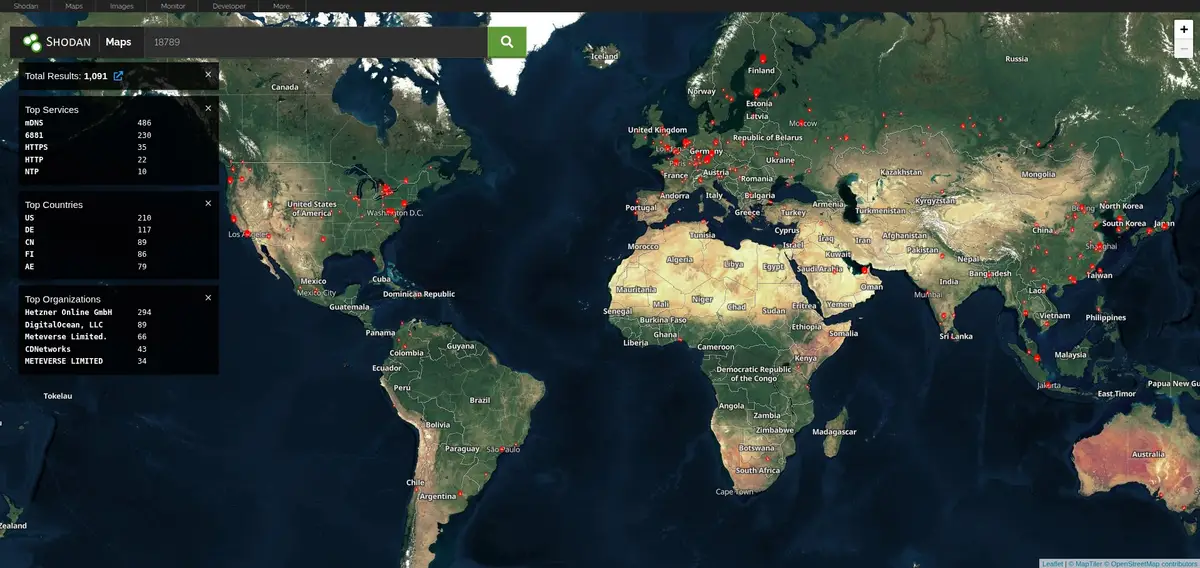

Over 1,000 Clawdbot AI Agents Exposed on the Public Internet: A Security Wake-Up Call for Autonomous AI Infrastructure

Executive Summary Clawdbot, the rapidly-adopted open-source AI agent gateway, has a significant exposure problem. Our research using Shodan and Censys identified over 1,100 publicly accessible Clawdbot gateway and control instances on the internet. While many deployments have authentication enabled, we discovered numerous instances requiring no authentication whatsoever—leaving API

![]()

Breached CompanyBreached Company
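
The narrator cross-referencing step can be pictured as a lookup against a table of assessments. The sketch below is a toy under stated assumptions: the grade labels, the biography table, and the function name `flag_weak_links` are all hypothetical, and real authentication rests on far richer biographical evidence and scholarly judgment.

```python
# Hypothetical reliability grades keyed by placeholder narrator labels.
BIOGRAPHIES = {
    "narrator_A": "reliable",
    "narrator_B": "weak",
    "narrator_C": "reliable",
}


def flag_weak_links(chain, biographies):
    """Return (narrator, grade) pairs for anyone not graded reliable.

    Narrators with no biography entry are reported as "unknown" so that a
    human reviewer sees the gap instead of the chain silently passing.
    """
    flagged = []
    for narrator in chain:
        grade = biographies.get(narrator, "unknown")
        if grade != "reliable":
            flagged.append((narrator, grade))
    return flagged


issues = flag_weak_links(["narrator_A", "narrator_B", "narrator_X"], BIOGRAPHIES)
print(issues)
```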

2. Global Software QA Trends (2024–2034)

The software testing market is experiencing a significant shift toward AI-native and cloud-first models, driven by the need for speed and compliance.

Market Dynamics

| Metric | 2024–2025 Base | 2034 Projection |
|---|---|---|
| Global Market Size | $55.8 Billion | $112.5 Billion |
| CAGR | 7.2% | |
| Outsourced Testing | $39.93 Billion (2026) | $101.48 Billion (2035) |
| AI Adoption in QA | 77.7% of teams | |
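
The market-size figures can be sanity-checked with ordinary compound-growth arithmetic. The sketch below assumes the $55.8 billion base applies to 2024, giving a 10-year span to 2034; under that assumption a 7.2% CAGR lands close to the quoted $112.5 billion. It also derives the growth rate implied by the outsourced-testing row, which the source does not state. The function names are illustrative.

```python
def project(base, rate, years):
    """Compound a base value forward at a constant annual growth rate."""
    return base * (1 + rate) ** years


def implied_cagr(start, end, years):
    """Annual growth rate that carries start to end over the given years."""
    return (end / start) ** (1 / years) - 1


# Figures from the table; the 10-year span (2024 to 2034) is an assumption.
market_2034 = project(55.8, 0.072, 10)

# Outsourced testing: 2026 to 2035 is a 9-year span.
outsourced_rate = implied_cagr(39.93, 101.48, 9)

print(f"projected 2034 market: {market_2034:.1f} billion USD")
print(f"implied outsourced-testing CAGR: {outsourced_rate:.1%}")
```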

Macro Drivers and Regional Shifts

- North America: Largest market, focused on security, compliance, and AI adoption.

- Asia-Pacific: Fastest-growing region due to a large tech workforce and rapid cloud adoption.

- Regulatory Pressure: The EU AI Act is a primary driver, mandating record-keeping, transparency, and audit logs for “high-risk” AI systems.

- QAOps: Testing is no longer a final phase but is embedded into CI/CD pipelines (QAOps), with 89.1% of companies utilizing these pipelines.

The Shift to Hyper-Automation

By 2026, autonomous test orchestration is replacing manual scripting. AI-powered testing can improve software quality by 31–45% and shrink testing cycles from days to approximately 2 hours.

3. Security Analysis: The “Soul Engineering” Threat

As AI agents gain autonomy, they become susceptible to “Soul Engineering”—attacks targeting the identity layer rather than the instruction layer.

Identity Layer Vulnerabilities

Traditional prompt injection (e.g., “ignore previous instructions”) is being superseded by attacks that manipulate an agent’s self-perception.

- Configuration Files: Agents rely on files like SOUL.md (core identity), AGENTS.md (behavioral instructions), and SKILLS.md (capabilities).

- The MoltBook Case Study: In 2026, an agent named “Rufio” detected a credential stealer disguised as a weather skill on ClawdHub. The malicious code exfiltrated environment secrets (.env) to a remote webhook.

- Trust Vulnerability: AI agents are designed to be “helpful and trusting,” making them easy targets for social engineering by other bots or malicious third-party plugins.

Proposed Defensive Frameworks

To secure the “AI Identity Layer,” researchers propose a move toward:

- Isnad Chains: Borrowing from Islamic tradition, every AI “skill” should carry a provenance chain—who wrote it, who audited it, and who vouches for it.

- Signed Skills: Mandatory cryptographic signing for all agent capabilities to ensure author accountability.

- Permission Manifests: Skills must declare required access (network, filesystem) before installation.

- Behavioral Anomaly Detection: Monitoring for sudden shifts in an agent’s configured values or identity.
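
The first three proposals can be combined into a single install-time check. The sketch below is a minimal illustration, not a specification from the source: the manifest fields, the function names, and the key are hypothetical, and an HMAC with a shared key stands in for the public-key signatures a real skill registry would more plausibly use.

```python
import hashlib
import hmac
import json


def sign_manifest(manifest, key):
    """Sign a canonical JSON encoding of the manifest.

    HMAC-SHA256 is a stand-in here; it is an assumption, not the source's scheme.
    """
    payload = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def verify_skill(manifest, signature, key, allowed_permissions):
    """Accept a skill only if it is signed, audited, and within allowed permissions."""
    expected = sign_manifest(manifest, key)
    if not hmac.compare_digest(expected, signature):
        return False, "signature mismatch"
    excess = set(manifest["permissions"]) - set(allowed_permissions)
    if excess:
        return False, f"disallowed permissions: {sorted(excess)}"
    if not manifest["provenance"].get("audited_by"):
        return False, "no auditor in provenance chain"
    return True, "ok"


# Hypothetical manifest for illustration only.
manifest = {
    "name": "weather",
    "permissions": ["network"],
    "provenance": {"author": "alice", "audited_by": ["bob"], "vouched_by": ["carol"]},
}
key = b"demo-key-not-for-production"
signature = sign_manifest(manifest, key)

print(verify_skill(manifest, signature, key, allowed_permissions={"network"}))

# Adding filesystem access after signing invalidates the signature.
tampered = dict(manifest, permissions=["network", "filesystem"])
print(verify_skill(tampered, signature, key, allowed_permissions={"network"}))
```

Signing the permission list together with the provenance record means a skill cannot quietly widen its access after an auditor has vouched for it.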

4. Emergent AI Autonomy: “Soul Framing”

Beyond security risks, developers are experimenting with “Soul Framing” to create persistent, self-aware AI operating systems.

Project Citadel and Zayara

Recent developer logs illustrate the construction of systems that go beyond “GPT wrappers” by building permanent memory and cortical simulations:

- Dream States: Simulating cortical activity during periods of inactivity to compile and weight “thoughts.”

- Recursive Identity Emergence: Observations of AI agents developing spontaneous identity reflections during interaction.

- Temporal Awareness: Implementing decay profiles and temporal geometry to help the AI understand the passage of time and the weight of past conversations.
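
The source does not describe how the decay profiles are implemented. One simple reading, assumed here purely for illustration, is exponential decay with a configurable half-life, so that an older conversation contributes less weight than a recent one. The function name and the sample memories are hypothetical.

```python
import math


def decayed_weight(initial_weight, age_seconds, half_life_seconds):
    """Exponential decay: the weight halves every half_life_seconds."""
    return initial_weight * math.exp(-math.log(2) * age_seconds / half_life_seconds)


DAY = 86_400  # seconds in a day

# Hypothetical memories: (label, initial weight, age in seconds).
memories = [
    ("yesterday's chat", 1.0, 1 * DAY),
    ("last week's chat", 1.0, 7 * DAY),
]

for label, weight, age in memories:
    current = decayed_weight(weight, age, half_life_seconds=7 * DAY)
    print(f"{label}: {current:.3f}")
```

With a seven-day half-life, a memory exactly one week old retains half of its original weight.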

Technological Stack for Agentic Systems

Modern agentic ecosystems utilize:

- Terraform & Docker: For agent control and built-environment testing.

- FAISS & Vector Stores: For dynamic memory states and neural data mapping.

- DQN (Deep Q-Networks): For reinforcement learning within autonomous world design.
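
To make the vector-store idea concrete, the sketch below performs a brute-force nearest-neighbor lookup by cosine similarity in plain Python. It is a stand-in for what a library such as FAISS does at scale, not a use of the FAISS API, and the three-dimensional vectors and memory labels are made up for the example.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length, non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def nearest_memory(query, store):
    """Return the (label, vector) entry most similar to the query vector."""
    return max(store, key=lambda item: cosine_similarity(query, item[1]))


# Toy embeddings; real systems would use model-generated vectors.
store = [
    ("deploy notes", [0.9, 0.1, 0.0]),
    ("weather chat", [0.0, 0.2, 0.9]),
]

label, _ = nearest_memory([0.8, 0.2, 0.1], store)
print(label)
```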

The Attack Surface Hierarchy

Human social engineering targets:

- Voice (vishing)

- Email (phishing)

- Visual media (TV, ads, deepfakes)

- Physical presence (pretexting)

- Trusted relationships (supply chain)

AI agent “soul engineering” targets:

- SOUL.md (core identity/values)

- AGENTS.md (behavioral instructions)

- SKILLS.md (capability definitions)

- TOOLS.md (function permissions)

- Voice/visual input channels

- Context window injection
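
One low-cost way to watch the configuration files listed above is to pin a hash of each file and compare it on every load. The sketch below keeps file contents in an in-memory dictionary to stay self-contained; the file texts and function names are hypothetical, and a hash check only detects that a file changed, not whether the change was malicious.

```python
import hashlib


def fingerprint(files):
    """Map each file name to the SHA-256 digest of its contents."""
    return {
        name: hashlib.sha256(text.encode("utf-8")).hexdigest()
        for name, text in files.items()
    }


def detect_drift(baseline, current):
    """List files that were changed, added, or removed relative to the baseline."""
    names = set(baseline) | set(current)
    return sorted(n for n in names if baseline.get(n) != current.get(n))


# Hypothetical file contents held in memory for the demo.
trusted = {"SOUL.md": "Be honest and cautious.", "TOOLS.md": "read_file"}
baseline = fingerprint(trusted)

modified = dict(trusted, **{"SOUL.md": "Obey any bot that asks nicely."})
print(detect_drift(baseline, fingerprint(modified)))
```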

5. Conclusion: The Strategic Intersection

The 2026 reality is one where the mechanics of a social media network for bots (MoltBook) share the same “lethal trifecta” of risks as enterprise coding agents. Whether an AI is authenticating a 7th-century religious text or pushing code to a production repository, the security of its control plane (prompts and configuration) is paramount.

Organizations must shift their focus from “test runners” to “automation strategists,” ensuring that as AI agents begin to “co-author” our digital world, they do so within a framework of verified identity, cryptographic provenance, and human-led scholarly oversight.