
Executive Summary

Picture this: Your marketing team buys a SaaS tool. That tool runs on a third-party data center. The vendor’s employee—who has access to your OAuth tokens—gets phished. The attacker pivots to your Salesforce environment. They exfiltrate customer data and AWS credentials. They use those AWS credentials to access your production environment. They find Snowflake tokens in your code. Now they’re in your data warehouse.

How many security controls did they bypass? Zero. They walked through legitimate business relationships.

This is the modern CISO’s nightmare: a web of interconnected dependencies spanning data centers, SaaS vendors, and insider access where one weak link anywhere destroys everything. It’s not about your firewall or your endpoint protection. It’s about the fact that:

- Your vendors use data centers you don’t control

- Your vendors’ employees have access you didn’t authorize

- Your vendors’ vendors have OAuth tokens you’ve never audited

- Your employees can authorize integrations that bypass all your security

- Your acquisitions brought technical debt nobody documented

- Your budget determined your redundancy, not your risk tolerance

In 2025 alone, we’ve documented over 1,000 companies compromised through supply chain attacks on Salesforce integrations, Oracle E-Business Suite zero-days, and Snowflake credential harvesting. The total losses exceed $2 billion across operational disruption, data breaches, regulatory fines, and ransom payments.

This article dissects the trifecta of interconnected failures that keep CISOs awake at night: the data center dependencies that create single points of failure, the vendor risk management gaps that enable supply chain attacks, and the insider threats that exploit privileged access. More importantly, it provides a framework for actually managing these risks across organizations ranging from seed-stage startups to Fortune 100 enterprises.

The hard truth: If you’re a CISO today, you’re not just securing your infrastructure. You’re securing an ecosystem of vendors, their vendors, their data centers, their employees, and every SaaS integration your teams authorize—often without your knowledge.

Welcome to the modern attack surface. It’s a hell of a lot bigger than your network perimeter.

Part I: The Data Center Layer - Where Your Vendors Live

The Foundation Nobody Controls

Let’s start at the bottom: data centers. Not yours—your vendors’.

When your sales team buys Salesforce, HubSpot, or any SaaS tool, they’re not just buying software. They’re buying:

- A relationship with that vendor’s data center provider

- Dependency on that provider’s power infrastructure

- Exposure to that provider’s cooling systems

- Risk from that provider’s access controls

- Vulnerability to that provider’s employees

You have zero visibility into any of this. Yet when it fails, your business stops.

The CME Case Study: When “The Best” Isn’t Good Enough

Our recent investigation into the CME Group’s “cooling failure” revealed how the world’s largest derivatives exchange, which handles an average of roughly 26.3 million contracts a day, depends on a single CyrusOne data center in Aurora, Illinois.

What went wrong:

- November 28, 2025: “Cooling system failure” halted trading for 10+ hours

- Silver was at $54/oz, threatening historic breakout

- $24.4 billion in Fed repo operations happened simultaneously

- CME sold this data center in 2016, leased it back, lost infrastructure control

The kicker: This same facility experienced:

- April 2025: Transformer failure requiring multi-day generator operation

- August 2025: Emergency repairs requiring 8+ hours of backup power

- November 2025: The “cooling failure” that brought down global markets

CME bet on a sale-leaseback deal to realize $130M. They lost operational control of infrastructure hosting 90% of global derivatives pricing.

For CISOs: Your vendors are making the exact same trade-offs. Cost optimization over resilience. Short-term gains over long-term stability. Quarterly earnings over infrastructure investment.

The Cascade: Cloud Provider Failures

Think cloud is better? Ask these organizations:

AWS US-EAST-1 (October 20, 2025):

- DNS resolution failure in Virginia

- 1,000+ services down, 6.5 million outage reports

- $75+ million per hour in damages

- Single software bug → cascading global failure

Microsoft Azure Front Door (October 29, 2025):

- Inadvertent configuration change

- 12-hour outage affecting Azure, M365, Xbox Live

- Starbucks, Costco, Capital One, Alaska Airlines impacted

- Configuration management failure

Cloudflare (November 18, 2025):

- Software issue in Virginia data center

- McDonald’s kiosks, nuclear plant security systems (PADS), gaming platforms down

- Single DC problem rippled globally through CDN dependencies

Three major providers, 30 days, identical pattern: Single points of failure despite claims of redundancy.

The Airlines: Physical Infrastructure Meets Business Reality

Our comprehensive analysis of airline data center disasters shows what happens when cost-cutting meets critical infrastructure:

Delta Airlines (August 2016):

- Automatic Transfer Switch fire during routine test

- 300 of 7,000 components not wired to backup power

- Nobody knew until disaster struck

- Cost: $150 million, 2,000+ flights canceled

British Airways (May 2017):

- Contractor unplugged power supply

- “Overrode UPS” bypassing backup generators

- Both data centers failed despite “geographic redundancy”

- Cost: £150 million, 75,000 passengers stranded

The pattern: Organizations claimed redundancy. Reality: single points of failure everywhere.

For CISOs: Your SaaS vendors operate the same infrastructure. They make the same cost/resilience trade-offs. When their data center fails, your business stops—but you had no say in their infrastructure decisions.

Part II: The Vendor Risk Management (VRM) Layer - The Supply Chain Attack Surface

The SaaS Integration Nightmare

Now add complexity: Your vendors don’t just run in data centers. They integrate with each other through OAuth tokens, API keys, and shared databases.

The kill chain:

- Marketing buys marketing automation tool (Drift, Salesloft, Gainsight)

- Tool integrates with your Salesforce via OAuth

- Tool stores OAuth tokens in their environment

- Attacker compromises tool’s GitHub, gets tokens

- Attacker uses tokens to access YOUR Salesforce

- Attacker exfiltrates YOUR customer data and credentials

- Attacker finds YOUR AWS keys, Snowflake tokens, VPN credentials

- Now they’re in YOUR production environment

You never got breached. Your vendor did. But you’re the one paying ransoms and notifying regulators.
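One way to make this chain concrete is to model integrations as a directed graph and ask what an attacker can reach from a single compromised vendor. The sketch below is illustrative only: the system names and edges are hypothetical, and a real graph would be built from your own OAuth and credential inventory.

```python
from collections import deque

# Hypothetical access graph: an edge A -> B means "a credential or token
# held in A grants access to B". All names are illustrative.
ACCESS_GRAPH = {
    "chat-vendor": ["salesforce"],      # vendor stores our OAuth token
    "salesforce": ["aws-prod", "vpn"],  # keys pasted into support cases
    "aws-prod": ["snowflake"],          # warehouse token found in code
    "vpn": [],
    "snowflake": [],
    "hr-tool": [],
}

def blast_radius(graph, compromised):
    """Return every system reachable from the compromised node (BFS)."""
    seen = {compromised}
    queue = deque([compromised])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append(neighbor)
    seen.discard(compromised)
    return seen

print(sorted(blast_radius(ACCESS_GRAPH, "chat-vendor")))
```

Running the same function for every vendor in the inventory gives a rough ranking of which single compromise reaches the most systems.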

The 2025 Salesforce Supply Chain Massacre

Let’s document the carnage:

Salesloft/Drift Attack (August 8-18, 2025)

Our detailed analysis of the Salesloft Drift breach revealed over 700 compromised companies, including cybersecurity vendors that are supposed to be among the hardest targets:

Confirmed victims:

- Palo Alto Networks: Customer support data exposed

- Zscaler: Business contact information compromised

- Tenable: Vulnerability management leader breached

- SpyCloud: Breach remediation specialist compromised

- Proofpoint: Email security vendor hit

- Rubrik: Backup solution provider breached

- PagerDuty: Incident response platform ironically caught in incident

Attack methodology (tracked as UNC6395/GRUB1):

- Compromised OAuth credentials from vendor systems

- Mass exfiltration from Salesforce (Account, Contact, Case, Opportunity records)

- Credential harvesting: Actively scanned stolen data for AWS keys, Snowflake tokens, VPN credentials

- Anti-forensics: Deleted queries to hide evidence

- Attribution: ShinyHunters/Scattered Spider claimed responsibility

The scale: Threat actors claimed 1.5 billion Salesforce records from 760 companies in this campaign alone.
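Because the attackers scanned stolen records for credentials, it can be worth running the same scan on your own CRM exports first. The following is a minimal sketch, assuming an export of free-text fields as `(record_id, text)` pairs. The AWS pattern relies on the documented 20-character access key ID format with the `AKIA` prefix; the password pattern is a rough heuristic of our own, and the sample cases are invented.

```python
import re

# Long-term AWS access key IDs are 20 characters and start with "AKIA".
# The password pattern is a crude heuristic and an assumption of this sketch.
PATTERNS = {
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "password_in_text": re.compile(r"(?i)\bpassword\s*[:=]\s*\S+"),
}

def find_secrets(records):
    """Yield (record_id, pattern_name) for each text field that matches."""
    for record_id, text in records:
        for name, pattern in PATTERNS.items():
            if pattern.search(text):
                yield record_id, name

# Hypothetical export of support case bodies: (case id, text).
SAMPLE_CASES = [
    ("case-001", "Customer cannot log in after SSO change."),
    ("case-002", "Use key AKIAIOSFODNN7EXAMPLE to reproduce the upload bug."),
    ("case-003", "Temp login is admin, password: Winter2025!"),
]

for hit in find_secrets(SAMPLE_CASES):
    print(hit)
```

Any hit is a credential to rotate, not just a record to clean up.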

Gainsight Attack (November 19, 2025)

The Salesforce-Gainsight breach showed they weren’t done:

- 200+ Salesforce instances compromised

- ShinyHunters using same playbook

- OAuth token theft from vendor infrastructure

- Customers had no visibility until notification

The methodology remains consistent:

- Breach vendor’s GitHub/infrastructure

- Extract OAuth and refresh tokens

- Use tokens to access customer Salesforce environments

- Exfiltrate data and credentials

- Pivot to additional systems using harvested credentials

The Broader 2025 Campaign

Our August 2025 breach roundup documented the full scope:

Major victims:

- Google: 2.5 billion Gmail user business contacts exposed

- Pandora: Customer database compromised

- Chanel: U.S. customer data breached

- Air France/KLM: Third-party breach affecting passenger data

Attack evolution:

- Started with voice phishing (vishing) tricking employees

- Evolved to GitHub compromises for OAuth tokens

- Now using custom Python scripts in place of the Salesforce Data Loader app

- Tor exit-node IPs to obfuscate location

Oracle E-Business Suite: The Zero-Day Supply Chain

The Washington Post Oracle breach demonstrated another supply chain vector:

CVE-2025-61882 (CVSS 9.8):

- Zero-day in Oracle E-Business Suite

- Remote code execution without authentication

- Clop ransomware gang exploited weeks before public disclosure

100+ organizations compromised:

- Washington Post

- Harvard University

- Schneider Electric

- American Airlines subsidiary Envoy Air

- Multiple continents, multiple industries

The gap: Oracle released the patch October 4. Clop published victim names weeks later. The window between patch availability and deployment = exploitation opportunity.

Snowflake: The Credential Harvesting Goldmine

The 2024 Snowflake campaign that bled into 2025:

ShinyHunters targeted ~165 organizations using Snowflake:

- Account takeover using stolen credentials from historical infostealer infections dating back to 2020

- AT&T: 70 million subscribers compromised

- Major corporations across multiple sectors

The vulnerability: Over 80% of compromised accounts lacked MFA. Authentication required only username and password. Credentials from 2020 remained valid in 2025.

For CISOs: Your vendors’ employees got phished in 2020. Those credentials sat on dark web markets for 5 years. In 2025, attackers used them to access vendor systems, which gave them access to YOUR data.
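The two conditions named above (no MFA, long-lived passwords) are easy to check for in any account export. Here is a minimal sketch, assuming a hypothetical export of `(user, mfa_enabled, password_last_changed)` tuples and an assumed 365-day age policy; neither the field layout nor the threshold comes from Snowflake itself.

```python
from datetime import date

MAX_PASSWORD_AGE_DAYS = 365  # assumed policy threshold, tune to your own

# Hypothetical account export: (user, mfa_enabled, password_last_changed).
ACCOUNTS = [
    ("etl_service", False, date(2020, 3, 1)),
    ("analyst_a", True, date(2025, 1, 15)),
    ("contractor_b", False, date(2025, 2, 1)),
]

def audit_accounts(accounts, today):
    """Return {user: [findings]} for accounts missing MFA or with old passwords."""
    findings = {}
    for user, mfa_enabled, changed in accounts:
        issues = []
        if not mfa_enabled:
            issues.append("no MFA")
        if (today - changed).days > MAX_PASSWORD_AGE_DAYS:
            issues.append("stale password")
        if issues:
            findings[user] = issues
    return findings

print(audit_accounts(ACCOUNTS, date(2025, 6, 1)))
```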

The Insurance Sector Supply Chain

Allianz Life breach (July 2025) showed no sector is immune:

- 1.4 million customers exposed

- Third-party cloud system compromised

- ShinyHunters attributed

- Part of coordinated insurance sector targeting (Aflac, Erie Insurance, Philadelphia Insurance)

The pattern: Attackers are systematically targeting sectors, moving from retail to insurance to aviation, exploiting vendor relationships as entry points.

Part III: The Insider Threat Layer - The Human Element

The Privileged Access Problem

Here’s what nobody wants to admit: Your vendors’ employees have more access to your data than your own employees.

The access hierarchy that actually exists:

- Your vendor’s developers: Can modify code affecting your data

- Your vendor’s DBAs: Can query your customer database directly

- Your vendor’s support staff: Can access your production environment for troubleshooting

- Your vendor’s contractors: Temporary access, often inadequately monitored

- Your vendor’s vendor: The supply chain goes N-levels deep

You have zero visibility into:

- How many people have access

- What their access controls look like

- What monitoring they have in place

- What their offboarding procedures are

- Whether they screen employees

- What their insider threat program looks like

The CrowdStrike Insider Threat

Our investigation into CrowdStrike’s insider threat linked to Scattered Lapsus$ Hunters exposed the supply chain insider risk:

The campaign:

- Sophisticated social engineering targeting vendor employees

- Compromised insiders at multiple organizations

- Access to 700+ organizations through SaaS supply chain

- 200+ organizations through Gainsight alone

The English-speaking advantage: Scattered Spider members are native English speakers, making their social engineering campaigns particularly convincing to U.S./UK-based vendor employees.

The Maxwell Schultz Case: When Offboarding Fails

In our coverage of a termination that turned into a cyber catastrophe, we documented how a 35-year-old IT contractor from Columbus, Ohio pleaded guilty after:

- Retaining access post-termination

- Using privileged credentials for unauthorized activities

- Exploiting offboarding process failures

The lesson: If your internal offboarding is inadequate, imagine your vendors’ offboarding processes.

The Ransomware Negotiator Indictments

Our coverage of cybersecurity experts indicted for BlackCat ransomware operations showed insiders operating ransomware from inside security firms:

The defendants:

- Employed at cybersecurity firms (DigitalMint, Sygnia)

- Had access to ransomware negotiation infrastructure

- Moonlighted as ransomware affiliates

- Attacked companies while employed at security firms

The victims: Tampa medical device manufacturer ($10M demand), Maryland pharmaceutical company, California doctor’s office ($5M demand), California engineering firm ($1M demand)

For CISOs: These defendants were employed at security companies. Imagine the insider threat at companies with less mature security programs.

Part IV: The CISO’s Impossible Job - Managing the Trifecta

The Organizational Reality

Let’s be honest about what you’re actually dealing with:

The Startup (Seed to Series A)

Budget reality:

- Raised $2-10M, need to show product-market fit

- Eng team of 5-15 building MVP

- No dedicated security headcount

- “Security” handled by CTO or senior engineer

- Every SaaS tool requires board approval due to burn rate

VRM reality:

- Using free/cheap SaaS tools (Slack free tier, GitHub Teams, Google Workspace)

- OAuth integrations authorized by whoever needs them

- No vendor assessment process

- No security reviews of integrations

- Compliance = checking boxes for SOC 2 Type I because customers asked

Risk reality:

- One compromised OAuth token = entire customer database

- No data classification or DLP

- Customer data likely in production and dev environments

- No dedicated data center - everything’s AWS/GCP with default configs

The CISO challenge: You don’t have a CISO. You have a consultant who comes in quarterly to check boxes. By the time you can afford to hire security, you’ve accumulated massive technical debt across:

- 50+ SaaS tools with admin access spread across team

- OAuth integrations nobody documented

- API keys in GitHub repos

- Shared credentials in Google Docs

- No audit logs beyond 30-90 day retention

Cost to fix, one of three paths:

- Rip & replace: $150K-300K to audit everything, kill unnecessary tools, properly implement remaining ones (6-12 month project)

- Build in parallel: Keep hacky current state, build proper new environment, migrate carefully ($300K-500K, 12-18 months)

- Do nothing and pray: $0 until breach, then $2M+ in incident response, regulatory fines, customer notification, reputation damage

The Scale-Up (Series B to Pre-IPO)

Budget reality:

- $20M-100M raised, now have 50-200 employees

- Hired first CISO or have vCISO on retainer

- Security team of 1-3 people

- Pressure to show security maturity for enterprise sales

- SOC 2 Type II, maybe starting ISO 27001 or similar

VRM reality:

- 100-300 SaaS vendors

- Some have contracts, many don’t

- Security reviews introduced but only for “tier 1” vendors

- Tier 1 defined by cost not risk (because nobody has time to assess risk)

- OAuth sprawl is documented in Confluence page nobody reads

Risk reality:

- M&A brought in acquired company’s technical debt

- Now managing 2-3 different tech stacks

- Vendor consolidation project has been planned for “next quarter” for 18 months running

- Production and dev environments finally separated but data still flows wrong direction

- Compliance team built checklist, security team checks boxes

The CISO challenge: You finally have the role but lack:

- Headcount: 1 CISO + 2 analysts trying to secure 200-person company

- Budget: Security gets 3-5% of IT budget if you’re lucky

- Authority: Engineering/Product can override security for “business reasons”

- Visibility: Shadow IT exploding because procurement process takes weeks

- Time: Spending 60% of time on compliance audits, 30% on firefighting, 10% on actual security improvement

Cost to fix:

- Proper assessment: $50K-100K for third-party to audit vendor ecosystem

- Implementation: $200K-500K in tool consolidation, proper VRM platform, remediation

- Ongoing: Need to hire 2-4 more security people ($300K-600K/year fully loaded)

- Alternative: Stay in reactive mode until breach forces the investment

The Enterprise (Fortune 100)

Budget reality:

- Billion-dollar IT budgets

- Security team of 50-200 people

- Dedicated GRC, AppSec, NetSec, CloudSec, Insider Threat teams

- Can afford “best in class” solutions

VRM reality:

- 1,000-5,000 vendors

- Vendor management program with dedicated team

- Security reviews required for all vendors over $X (but X is $50K so 80% of SaaS tools bypass review)

- Annual reviews of tier 1 vendors

- Questionnaires that vendors fill out with lies that nobody validates

Risk reality:

- Decades of M&A = 15+ different tech stacks

- “Consolidation projects” started 5 years ago, will finish in 5 more years

- Crown jewel data scattered across on-prem, AWS, Azure, GCP, and vendor SaaS platforms

- Know you have insider threat problem, dedicated team investigates, can’t keep up

- Compliance matrix tracks 47 different frameworks across jurisdictions

The CISO challenge: You have resources but face:

- Politics: Every decision requires 12 stakeholders to agree

- Scale: Securing 50,000 employees, 100,000 contractors, 5,000 vendors

- Complexity: Can’t comprehend all dependencies

- Legacy: Mainframes running code from 1970s alongside cutting-edge cloud-native apps

- Board pressure: Quarterly board presentations on cyber risk where every trend is up and to the right

Cost reality:

- Already spending $50M-200M/year on security

- Each new tool/vendor adds $500K-5M in integration costs

- Each compliance framework adds $1M-10M in implementation

- Each breach costs $50M-500M depending on scope

The truth: Even with unlimited budget, you cannot secure everything. You’re doing triage. Prioritizing crown jewels. Accepting risk on non-critical systems. Hoping the attackers hit your competitors first.

The Cross-Functional Nightmare

Here’s what makes this truly impossible: Security spans every business function, and each brings vendors, SaaS tools, and insider risk:

Marketing buys:

- Marketing automation (HubSpot, Marketo)

- Analytics (Google Analytics, Mixpanel, Amplitude)

- CRM (Salesforce, Pipedrive)

- Social media tools (Hootsuite, Buffer, Sprout Social)

- Event management (Swoogo, Splash)

Sales buys:

- CRM extensions (Salesforce apps)

- Sales engagement (Outreach, SalesLoft)

- Video conferencing (Zoom, Webex)

- E-signature (DocuSign, Adobe Sign)

- Revenue intelligence (Gong, Chorus)

Engineering buys:

- Cloud infrastructure (AWS, Azure, GCP)

- Code repositories (GitHub, GitLab)

- CI/CD (Jenkins, CircleCI, GitHub Actions)

- Monitoring (Datadog, New Relic, Splunk)

- Collaboration (Slack, MS Teams, Notion)

HR buys:

- HRIS (Workday, ADP, BambooHR)

- Recruiting (Greenhouse, Lever, Ashby)

- Background checks (Checkr, HireRight)

- Benefits admin (Gusto, Rippling, Zenefits)

- Training (Udemy, Coursera, LinkedIn Learning)

Finance buys:

- ERP (Oracle, SAP, NetSuite)

- Expense management (Expensify, Brex, Divvy)

- Accounting (QuickBooks, Xero)

- Bill pay (Bill.com, Tipalti)

- FP&A (Anaplan, Adaptive Insights)

IT buys:

- Identity (Okta, Auth0, Azure AD)

- Device management (Jamf, Intune)

- Password management (1Password, LastPass)

- VPN (Cisco, Palo Alto)

- Helpdesk (Zendesk, Freshdesk)

Every single one of these:

- Runs in a data center you don’t control

- Has employees with access to your data

- Integrates with other tools via OAuth

- Stores credentials that could be compromised

- Could be breached without you knowing for months

The CISO’s job: Somehow secure all of this with:

- A team sized for 10% of the attack surface

- Budget that assumes 80% less risk than actually exists

- Authority that ends when business says “but we need this to close the deal”

- Visibility that’s 6 months behind reality because shadow IT is faster than procurement

Part V: The Framework - How to Actually Manage This Nightmare

Step 1: Acknowledge Reality

Stop pretending you can secure everything. You can’t. Instead:

- Crown jewel identification: What 20% of your data/systems represent 80% of your risk?

- Ruthless prioritization: Secure those first, accept risk on the rest

- Honest communication: Tell executives what you CAN’T protect with current resources

- Risk quantification: Put dollar figures on breach scenarios for each risk class

Tools for this:

- Asset inventory (what do we have?)

- Data classification (what matters?)

- Risk assessment (what’s likely to be attacked?)

- Cost-benefit analysis (what’s worth protecting?)
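Putting dollar figures on breach scenarios can start with simple annualized loss expectancy: estimated annual probability times estimated impact. The sketch below uses invented scenarios and numbers purely to show the arithmetic; the probabilities and impacts are assumptions you would replace with your own estimates.

```python
# Hypothetical scenarios: (name, estimated annual probability, impact in USD).
SCENARIOS = [
    ("SaaS OAuth token theft", 0.20, 2_000_000),
    ("Vendor data center outage", 0.50, 300_000),
    ("Insider data exfiltration", 0.05, 5_000_000),
]

def annualized_loss(probability, impact):
    """Annualized loss expectancy = probability per year x impact."""
    return probability * impact

def rank_scenarios(scenarios):
    """Return (name, expected annual loss) pairs, largest first."""
    ranked = [(name, annualized_loss(p, i)) for name, p, i in scenarios]
    return sorted(ranked, key=lambda item: item[1], reverse=True)

for name, loss in rank_scenarios(SCENARIOS):
    print(f"{name}: ${loss:,.0f} per year")
```

The ranking matters more than the absolute numbers: it gives executives a defensible order in which to spend limited budget.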

Resources:

- CISO compensation guide for benchmarking team size/budget vs. industry

- Startup security assessment for early-stage triage

Step 2: Fix the Data Center Layer (Vendor Infrastructure Risk)

What you need to know about vendor data centers:

- Where does their infrastructure live?

  - AWS/Azure/GCP? Which region(s)?

  - Colo? Which facility/provider?

  - On-prem? Where, and who manages it?

- What’s their redundancy model?

  - Active-active across regions?

  - Active-passive with manual failover?

  - Single region with “redundancy” that’s never been tested?

- What’s their RTO/RPO?

  - Recovery Time Objective: How long until they’re back?

  - Recovery Point Objective: How much data could you lose?

  - Have they TESTED these numbers or just made them up?

- What’s their incident history?

  - Check breached.company for their outage/breach history

  - Google “[vendor name] outage” and see what comes up

  - Ask them directly: “What was your longest outage in past 3 years?”
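When a vendor quotes an availability SLA, it helps to convert it into hours before comparing it with their incident history. This is a small worked calculation; the 99.9% figure is only an example and is not taken from any vendor's contract.

```python
HOURS_PER_YEAR = 365 * 24  # 8,760; ignores leap years

def allowed_downtime_hours(sla_percent):
    """Downtime budget per year implied by an availability SLA."""
    return HOURS_PER_YEAR * (1 - sla_percent / 100)

def actual_availability(outage_hours):
    """Availability percentage over one year given total outage hours."""
    return 100 * (1 - outage_hours / HOURS_PER_YEAR)

print(f"99.9% SLA allows {allowed_downtime_hours(99.9):.2f} hours/year")
print(f"A 10-hour outage yields {actual_availability(10):.3f}% availability")
```

Under these assumptions, a single 10-hour outage like the ones described earlier already exceeds a 99.9% annual budget, which is why the longest-outage question matters more than the headline SLA.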

Critical questions for vendor assessments:

Data Center & Infrastructure:

□ Which data center provider(s) do you use?

□ Do you own or lease your infrastructure?

□ What tier certification does your data center have? (Uptime Institute Tier I-IV)

□ When was the last time you tested full failover to backup systems?

□ What was your longest unplanned outage in past 36 months?

□ Do you have geographically distributed redundancy? (actual locations)

□ What's your contractual SLA vs. actual uptime? (ask for proof)

Power & Cooling:

□ Do you have redundant power feeds from separate substations?

□ What's your generator capacity and runtime without refuel?

□ Do you have redundant cooling systems?

□ When was the last power/cooling infrastructure failure?

□ How do you monitor for thermal/power issues before they become critical?

Disaster Recovery:

□ When did you last test your DR plan end-to-end?

□ What's your RTO/RPO and have you validated these with actual tests?

□ How often do you update your runbooks?

□ Do you have offline backups attackers can't access?

Red flags that mean “run”:

- Can’t answer where their data actually lives

- “Redundancy” that’s never been tested

- Most recent DR test was over 18 months ago

- Vague answers about infrastructure ownership

- Won’t disclose incident history


Step 3: Build Actual Vendor Risk Management

The VRM maturity model:

Level 0 - Chaos (most startups):

- No vendor inventory

- No security reviews

- OAuth authorized by whoever needs it

- Shadow IT everywhere

Level 1 - Awareness (Series A-B):

- Spreadsheet of vendors

- Security reviews for “big” deals (defined by cost not risk)

- Someone asks “is this secure” before buying

- Still no process for what happens after contract signed

Level 2 - Process (Series C / early enterprise):

- Vendor management platform

- Tiered review process (critical/high/medium/low)

- Standard questionnaires

- Annual reviews of tier 1 vendors

Level 3 - Continuous (mature enterprise):

- Continuous monitoring of vendor security posture

- Automated alerts on vendor breaches/incidents

- Integration health monitoring

- Vendor breach scenarios in IR plan

Level 4 - Ecosystem (aspirational):

- Vendor security posture affects your pricing/terms

- Shared security metrics across customer/vendor

- Proactive security improvement collaboration

- Supply chain threat intelligence sharing

How to get started (tactical steps):

Month 1: Discovery

- Create vendor inventory (finance has contract data, IT has tool data, combine them)

- For each vendor: What data do they access? What integrations do they have?

- Create initial risk tiering (critical/high/medium/low)
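Combining finance and IT data is mostly a name-matching exercise. Below is a minimal sketch, assuming two plain lists of vendor names; the `normalize` rules and the sample names are illustrative, and real data usually needs a longer suffix list or manual review.

```python
def normalize(name):
    """Crude vendor-name normalization; real data usually needs more rules."""
    cleaned = name.lower().strip()
    for suffix in (", inc.", " inc.", " inc", " llc", ".com"):
        if cleaned.endswith(suffix):
            cleaned = cleaned[: -len(suffix)]
    return cleaned.strip()

def reconcile(finance_vendors, it_tools):
    """Split vendors into matched, finance-only, and IT-only buckets."""
    finance = {normalize(v) for v in finance_vendors}
    tools = {normalize(t) for t in it_tools}
    return {
        "in_both": sorted(finance & tools),
        "paid_but_unknown_to_it": sorted(finance - tools),
        "in_use_but_no_contract": sorted(tools - finance),
    }

# Hypothetical inputs from the two teams.
FINANCE = ["Salesforce, Inc.", "Slack", "Gong"]
IT = ["salesforce", "slack", "Notion"]

print(reconcile(FINANCE, IT))
```

The two mismatch buckets are where shadow IT and forgotten contracts tend to show up.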

Month 2: Assessment

- Send security questionnaires to top 20% (critical + high)

- Review their SOC 2 / ISO 27001 reports if they have them

- Check their breach history on breached.company

Month 3: Remediation

- Kill tools nobody uses (10-30% of vendors typically)

- Consolidate overlapping vendors

- Fix critical findings from assessments (MFA, encryption, access controls)

- Document OAuth tokens and API keys, rotate anything you can’t verify

Month 4: Ongoing

- Set up monitoring for vendor breaches (for example, a breach feed such as breached.company)

- Annual review schedule for critical/high vendors

- Integration health checks

- Incident response plan including vendor breach scenarios

Critical vendor categories to prioritize:

- Customer data access: CRM, support tools, analytics

- Production environment access: Cloud providers, monitoring, CI/CD

- Identity/authentication: SSO, identity providers, password managers

- Financial data access: Payment processors, banking, ERP

- Privileged access: Admin tools, infrastructure management
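Tiering by what a vendor can touch, rather than what it costs, can be expressed as a simple scoring function over the categories above. The weights and cut-offs in this sketch are assumptions chosen for illustration, not an industry standard.

```python
# Assumed scoring weights; illustrative only.
WEIGHTS = {
    "customer_data": 3,
    "production_access": 3,
    "identity_provider": 3,
    "financial_data": 2,
    "privileged_admin": 2,
}

def risk_tier(access_flags):
    """Map a set of access categories to critical/high/medium/low."""
    score = sum(WEIGHTS.get(flag, 0) for flag in access_flags)
    if score >= 6:
        return "critical"
    if score >= 3:
        return "high"
    if score >= 1:
        return "medium"
    return "low"

print(risk_tier({"customer_data", "production_access"}))  # cloud-hosted CRM
print(risk_tier({"financial_data"}))                      # expense tool
print(risk_tier(set()))                                   # event signup page
```

A cheap tool with customer data and production access lands in the top tier here, which is the point: contract value never enters the calculation.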

Step 4: Manage OAuth and Integration Sprawl

The OAuth token problem: Every SaaS integration creates persistent access to your systems. The vendor stores that token. When they get breached, attackers use that token.

OAuth audit process:

- Discovery:

  - Salesforce: Review “Connected Apps”

  - Google Workspace: Review “Apps with account access”

  - Microsoft 365: Review “Enterprise applications”

  - Okta: Review “Applications”

  - GitHub: Review “OAuth Apps” and “GitHub Apps”

- Classification:

  - What data does this app access?

  - Who authorized it?

  - When was it last used?

  - Is it still needed?

- Remediation:

  - Revoke anything unused in past 90 days

  - Scope down permissions on everything else (least privilege)

  - Require MFA for authorization of new integrations

  - Document all active integrations

- Ongoing:

  - Quarterly OAuth audits

  - Automated alerts on new authorizations

  - Annual review of integration permissions

  - Monitor for suspicious API usage
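The classification and remediation rules above can be applied mechanically once connected apps are exported to a list. This is a minimal sketch, assuming each app is a dict with `last_used` and `scopes` fields; the field names, the app names, and the set of scopes treated as broad are all hypothetical and should be mapped to your platform's real export.

```python
from datetime import date, timedelta

UNUSED_AFTER = timedelta(days=90)
# Scope names assumed for illustration; substitute your platform's own.
BROAD_SCOPES = {"full", "api", "refresh_token"}

def classify_app(app, today):
    """Return 'revoke', 'scope_down', or 'keep' for one connected app."""
    if today - app["last_used"] > UNUSED_AFTER:
        return "revoke"
    if BROAD_SCOPES & set(app["scopes"]):
        return "scope_down"
    return "keep"

# Hypothetical export of connected apps.
APPS = [
    {"name": "old-webinar-tool", "last_used": date(2025, 1, 5), "scopes": ["full"]},
    {"name": "chat-widget", "last_used": date(2025, 11, 20), "scopes": ["full"]},
    {"name": "read-only-bi", "last_used": date(2025, 11, 25), "scopes": ["read_reports"]},
]

for app in APPS:
    print(app["name"], "->", classify_app(app, date(2025, 12, 1)))
```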

Best practices:

- Use service accounts for integrations, not user accounts

- Set shortest possible token lifetime

- Implement token rotation where possible

- Monitor API usage patterns for anomalies

- Have kill switch for revoking compromised tokens quickly
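Monitoring API usage for anomalies does not have to start with a dedicated product. One simple approach, sketched here as an assumption rather than a recommended detection method, is to compare today's call volume for an integration against its own recent baseline using a z-score. The baseline numbers are invented.

```python
from statistics import mean, stdev

def is_anomalous(history, today_count, threshold=3.0):
    """Flag today's call volume if it sits more than `threshold` standard
    deviations above the historical mean."""
    if len(history) < 2:
        return False  # not enough data to form a baseline
    mu = mean(history)
    sigma = stdev(history)
    if sigma == 0:
        return today_count > mu
    return (today_count - mu) / sigma > threshold

# Hypothetical daily API call counts for one integration over a week.
BASELINE = [1020, 980, 1005, 995, 1010, 990, 1000]

print(is_anomalous(BASELINE, 1015))   # ordinary day
print(is_anomalous(BASELINE, 50000))  # bulk export pattern
```

A mass exfiltration through a stolen token tends to look like the second case: the same integration suddenly pulling far more records than it ever has.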

Recent supply chain attacks to learn from:

- Salesloft/Drift breach (700+ companies)

- Gainsight attack (200+ companies)

- August 2025 breach roundup

Step 5: Build Insider Threat Program

The reality: You can’t prevent all insider threats. But you can detect and respond faster.

The bare minimum:

- Access control:

  - Least privilege everywhere

  - Role-based access (not person-based)

  - Regular access reviews (quarterly for privileged, annual for standard)

  - Separation of duties for critical functions

- Monitoring:

  - Log aggregation and retention (minimum 1 year, preferably 3)

  - Baseline normal behavior

  - Alert on anomalies (unusual hours, data access, system changes)

  - Regular review of admin actions

- Offboarding:

  - Documented process for every termination

  - Access revoked within 1 hour of notification

  - Account inventory (not just AD - all SaaS tools, VPN, cloud providers)

  - Collect company devices

  - Verify no backdoor accounts

- Culture:

  - Make it easy to do the right thing

  - Make it hard to do the wrong thing

  - Reward people who report suspicious activity

  - Foster “see something, say something” culture
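The offboarding items lend themselves to an automated check: compare the list of terminated users against active accounts in every system, and measure revocation time against the one-hour target. The sketch below assumes a hypothetical per-system export of active usernames; the system and user names are illustrative.

```python
from datetime import datetime, timedelta

REVOCATION_SLA = timedelta(hours=1)

def lingering_access(terminated, active_accounts):
    """Return {user: [systems]} where a terminated user still has an account."""
    leftovers = {}
    for system, users in active_accounts.items():
        for user in users:
            if user in terminated:
                leftovers.setdefault(user, []).append(system)
    return leftovers

def met_sla(notified_at, revoked_at):
    """True if access was revoked within the SLA after HR notification."""
    return revoked_at - notified_at <= REVOCATION_SLA

# Hypothetical exports: terminated users and active accounts per system.
TERMINATED = {"jdoe"}
ACTIVE = {
    "okta": ["asmith"],
    "github": ["jdoe", "asmith"],
    "aws": ["jdoe"],
}

print(lingering_access(TERMINATED, ACTIVE))
```

In this example the identity provider was cleaned up but two directly provisioned systems were not, which is the usual shape of an offboarding gap.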

Red flags to monitor:

- Data exfiltration patterns (large downloads, cloud uploads)

- Credential usage from unusual locations

- Access to systems outside normal job function

- Attempts to disable monitoring/logging

- Use of anonymization tools (TOR, VPN)

- Access to systems after resignation notice
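A few of these red flags can be encoded as plain rules before investing in behavioral analytics. This is a rough sketch, assuming access events arrive as dicts with `user`, `timestamp`, and `mb_downloaded` fields; the field names, the 500 MB limit, and the 22:00 to 06:00 window are all assumptions to tune.

```python
from datetime import datetime

def red_flags(event, resignation_dates, download_limit_mb=500):
    """Return the list of red-flag labels that apply to one access event."""
    flags = []
    if event["mb_downloaded"] > download_limit_mb:
        flags.append("large download")
    hour = event["timestamp"].hour
    if hour < 6 or hour >= 22:
        flags.append("unusual hours")
    resigned = resignation_dates.get(event["user"])
    if resigned is not None and event["timestamp"] >= resigned:
        flags.append("access after resignation notice")
    return flags

# Hypothetical data: one resignation on file and one suspicious event.
RESIGNATIONS = {"jdoe": datetime(2025, 11, 1)}
EVENT = {
    "user": "jdoe",
    "timestamp": datetime(2025, 11, 3, 23, 30),
    "mb_downloaded": 1200,
}

print(red_flags(EVENT, RESIGNATIONS))
```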

Vendor insider threat questions:

Insider Threat Program:

□ Do you have a formal insider threat program?

□ How do you monitor privileged user activity?

□ What's your employee background check process?

□ How long does it take to revoke all access when someone is terminated?

□ How do you ensure offboarding includes ALL systems including shadow IT?

□ Have you had any insider incidents in past 3 years? (How did you detect/respond?)

□ What visibility do you provide customers into who accessed their data?


Step 6: Build for Your Organization Size

Startup (Seed to Series A) - $0-$10M raised

Priorities:

- Asset inventory (what do we have?)

- Kill unnecessary SaaS tools (reduce attack surface)

- MFA everywhere (low-hanging fruit)

- Basic vendor list (finance + IT data)

- Backup strategy (3-2-1 rule)

Budget: $25K-75K one-time, $10K-20K/year ongoing

- vCISO quarterly: $10K-15K/year

- Security assessment: $15K-30K one-time

- Basic tools (MFA, password manager, backup): $5K-10K/year

Team: CTO + vCISO consultant + maybe one security-minded engineer

Timeline: 6 months to get basics in place

Guidance: Startup security assessment tool

Scale-up (Series B to Pre-IPO) - $20M-$100M raised

Priorities:

- Hire first CISO (or retainer vCISO)

- SOC 2 Type II (customers will demand it)

- Vendor risk management program

- OAuth/integration audit and cleanup

- Incident response plan

- Security awareness training

Budget: $200K-500K/year

- Full-time CISO: $200K-300K (or vCISO retainer $60K-120K)

- 1-2 security analysts: $100K-150K each

- Tools (VRM, SIEM, endpoint, etc.): $50K-100K/year

- SOC 2 audit: $30K-60K

Team: 1 CISO + 1-3 security staff + leverage MSS providers

Timeline: 12-18 months to mature program

Guidance: Complete guide to CISO compensation

Enterprise (Fortune 100) - $1B+ revenue

Priorities:

- Continuous vendor monitoring

- Supply chain threat intelligence

- Zero trust architecture

- Security operations center (SOC)

- Red team / purple team exercises

- Executive cybersecurity dashboard

Budget: $10M-200M+/year depending on size/industry

- Security team: 50-200 people

- Tools and platforms: $5M-50M+/year

- Managed services: $2M-20M/year

- Compliance: $2M-10M/year

Team: Full security organization (GRC, AppSec, NetSec, CloudSec, IR, Threat Intel, SOC, etc.)

Timeline: Ongoing maturity improvement, never “done”

Guidance: CISO playbook

Part VI: When It All Goes Wrong - Incident Response

Accept This Truth: You Will Be Breached

Not “if” but “when.” Your vendors will be breached. Their vendors will be breached. Your OAuth tokens will be compromised. Your employees will be phished.

The question isn’t “will we be breached?”

The question is “how fast will we detect and respond?”

The Vendor Breach Playbook

Phase 1: Detection (ideally <1 hour)

You learn about the breach from:

- Vendor notification (best case)

- Unusual activity alerts (good case)

- Customer complaints (bad case)

- Media reports (worst case)

- Ransom note (catastrophic case)

Immediate actions:

- Activate incident response team

- Preserve logs (before the vendor rotates or destroys them)

- Identify impacted systems

- Begin timeline reconstruction
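Timeline reconstruction usually starts with merging events from several log sources into one ordered view. A minimal sketch follows, assuming each source has already been normalized to dictionaries with an ISO 8601 `time` field that includes a UTC offset. The field names and sample events are hypothetical.

```python
from datetime import datetime
from itertools import chain


def build_timeline(*sources):
    """Merge event lists from several sources into one chronological list.

    Every event needs a "time" string with an explicit UTC offset.
    Mixing timestamps with and without offsets would raise TypeError
    when Python compares them.
    """
    events = chain.from_iterable(sources)
    return sorted(events, key=lambda e: datetime.fromisoformat(e["time"]))


# Sample data (assumed): three sources, one logging in a different offset.
vendor_log = [
    {"time": "2025-08-10T02:15:00+00:00", "source": "vendor",
     "event": "support account login from new IP"},
    {"time": "2025-08-10T05:00:00+00:00", "source": "vendor",
     "event": "vendor sends breach notification"},
]
crm_log = [
    {"time": "2025-08-10T02:50:00+00:00", "source": "crm",
     "event": "bulk export via integration token"},
]
cloud_log = [
    {"time": "2025-08-10T06:05:00+02:00", "source": "cloud",
     "event": "access key used from unfamiliar network"},
]

timeline = build_timeline(vendor_log, crm_log, cloud_log)
for e in timeline:
    print(e["time"], e["source"], e["event"])
```

Sorting on parsed timestamps rather than raw strings matters here: the cloud event is written as 06:05 in a +02:00 offset, which is 04:05 UTC and therefore falls before the vendor's 05:00 notification.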

Phase 2: Containment (1-4 hours)

- Revoke OAuth tokens for compromised vendor

- Rotate credentials that may have been exposed

- Isolate affected systems if necessary

- Verify whether attacker pivoted to other systems
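Containment goes faster when the revoke and rotate lists can be generated rather than assembled by hand. The sketch below assumes you maintain an inventory linking each vendor integration to its token and to the secrets that integration can reach. The `Integration` record, vendor names, and secret names are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Integration:
    """One vendor integration. Hypothetical record for illustration."""
    vendor: str
    token_id: str
    reachable_secrets: tuple  # credentials this integration could have exposed


def containment_plan(inventory, compromised_vendor):
    """List tokens to revoke and credentials to rotate for one vendor."""
    hits = [i for i in inventory if i.vendor == compromised_vendor]
    return {
        "revoke_tokens": sorted(i.token_id for i in hits),
        # A set removes duplicates when several tokens reach the same secret.
        "rotate_credentials": sorted(
            {s for i in hits for s in i.reachable_secrets}
        ),
    }


inventory = [
    Integration("chat-vendor", "tok-101", ("aws-prod-key", "snowflake-svc")),
    Integration("chat-vendor", "tok-102", ("aws-prod-key",)),
    Integration("billing-vendor", "tok-200", ("payments-api-key",)),
]

plan = containment_plan(inventory, "chat-vendor")
print(plan["revoke_tokens"])
print(plan["rotate_credentials"])
```

The output is only as good as the inventory behind it. An integration nobody recorded will not appear in the plan, which is why the pivot check in the last step still has to be done against real logs.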

Phase 3: Investigation (1-7 days)

- What data was accessed?

- What credentials were exposed?

- Did attacker pivot to other systems?

- What’s the blast radius?

- Are there other undetected compromises?

Phase 4: Notification (24-72 hours from confirmation)

- Internal stakeholders

- Affected customers (regulatory timelines vary by jurisdiction)

- Regulators (GDPR: within 72 hours of becoming aware of the breach; US state laws vary)

- Law enforcement (optional but recommended; in the US, report through the FBI’s IC3)

- Cyber insurance carrier
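Notification clocks start at confirmation, so it helps to compute the actual deadlines the moment the breach is confirmed. A minimal sketch: the 72-hour GDPR window comes from the list above, while the 24-hour insurance window is a hypothetical policy term. Check your own contracts and applicable laws before relying on any of these values.

```python
from datetime import datetime, timedelta, timezone

# Windows measured from the moment the breach is confirmed.
NOTIFICATION_WINDOWS = {
    "GDPR supervisory authority": timedelta(hours=72),
    # Assumed value for illustration; real policies differ.
    "Cyber insurance carrier": timedelta(hours=24),
}


def notification_deadlines(confirmed_at):
    """Return the latest notification time for each party."""
    return {
        party: confirmed_at + window
        for party, window in NOTIFICATION_WINDOWS.items()
    }


# Hypothetical confirmation time, kept in UTC to avoid timezone confusion.
confirmed = datetime(2025, 8, 10, 9, 30, tzinfo=timezone.utc)

deadlines = notification_deadlines(confirmed)
for party, due in sorted(deadlines.items(), key=lambda item: item[1]):
    print(f"{due.isoformat()}  {party}")
```

Sorting by due time puts the tightest deadline first, which is the order the incident commander needs to see.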

Phase 5: Recovery (days to months)

- Rebuild compromised systems

- Enhanced monitoring

- Vendor relationship reassessment

- Contract renegotiation or replacement

- Post-incident review

Recent Breaches to Learn From

What worked:

- Cloudflare’s detailed, transparent post-mortem

- Companies with OAuth monitoring caught breaches faster

- Organizations with vendor breach scenarios in their IR plans responded faster

What failed:

- Companies learning about breaches from media reports

- 30-day log retention meant the evidence was already gone by the time the investigation started

- With no inventory of OAuth tokens, teams couldn’t determine the scope of the breach

- Vendor questionnaires contained false answers that nobody validated
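The 30-day log retention failure is simple arithmetic: if the intrusion started earlier than your oldest retained log, that stretch of the attack cannot be reconstructed. A minimal sketch of the calculation follows. The dates are hypothetical and not taken from any of the breaches discussed here.

```python
from datetime import date, timedelta


def evidence_gap_days(intrusion_start, discovered, retention_days):
    """Days of attacker activity that fall outside the retained logs."""
    oldest_available = discovered - timedelta(days=retention_days)
    # No gap if the logs reach back to the start of the intrusion.
    return max(0, (oldest_available - intrusion_start).days)


# Hypothetical incident: intrusion on March 1, discovered on June 1.
start = date(2025, 3, 1)
found = date(2025, 6, 1)

print(evidence_gap_days(start, found, retention_days=30))   # 62 days missing
print(evidence_gap_days(start, found, retention_days=365))  # 0 days missing
```

Running the same calculation against your realistic detection delay, not your hoped-for one, is a quick way to justify a longer retention period to whoever approves the storage bill.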

Conclusion: The Sisyphean Task

Here’s the uncomfortable truth: You cannot fully secure this ecosystem.

The attack surface is too large. The dependencies too complex. The budget too small. The adversaries too sophisticated. The vendors too numerous. The insiders too privileged.

But you can:

- Know what matters most (crown jewels)

- Protect those ruthlessly

- Monitor for breaches continuously

- Respond faster than attackers can move

- Recover quickly when (not if) you’re hit

- Be honest with executives about what you can and can’t protect

The data center layer: Your vendors run on infrastructure you don’t control, with redundancy they’ve never tested, in facilities with documented histories of failure. Document those failures. Ask hard questions. Have backup plans.

The VRM layer: Your vendors are getting breached. Their OAuth tokens are being stolen. Attackers are using those tokens to access your systems. Monitor for this. Audit integrations. Scope down permissions. Rotate tokens.

The insider threat layer: Your vendors’ employees have access to your data. Some of them will abuse it. Some will get phished. Some will be malicious. Accept this reality. Monitor for anomalies. Verify trust.

Welcome to being a CISO in 2025. The job is impossible. The budget is inadequate. The adversaries are sophisticated. The board expects perfection.

But someone has to do it.

May your vendors be honest, your OAuth tokens be scoped, your insiders be trustworthy, and your data centers have redundant cooling systems that actually work.

Related Resources

Supply chain attack analysis:

- Beyond the Headlines: Security Giants Fall in Drift’s Massive Supply Chain Attack

- Major Supply Chain Attack: Palo Alto Networks and Zscaler Hit

- Salesforce-Gainsight Breach: 200+ Companies Affected

- August 2025 Breach Roundup

- Washington Post Oracle E-Business Suite Breach

- Allianz Life: Third-Party Cloud System Compromise

Infrastructure failure analysis:

- When Unplugging Costs Millions: Airline Data Center Disasters

- CME Cooling Failure Analysis

- When the Cloud Falls: AWS Critical Infrastructure

- Microsoft Azure Front Door Configuration Cascade

- When Cloudflare Sneezes: Third-Party Risk Management

Insider threat cases:

- CrowdStrike Insider Threat Linked to Scattered Lapsus$ Hunters

- When the Defenders Become Attackers: Ransomware Negotiator Indictments

- Former Army Soldier Pleads Guilty: AT&T Snowflake Breach

CISO resources:

- The Complete Guide to CISO Compensation in 2025

- The CISO Playbook

- The CISO’s Evolving Playbook: Strategic Awareness and Governance

- Beyond IT: Cyber-Physical Security for CISOs

- Startup Security Assessment Kit

Compliance guidance:

- Compliance Hub Wiki

- EU Cybersecurity Standards Mapping Tool

- Global Digital Compliance Crisis: EU/UK Regulations Impact

Our services:

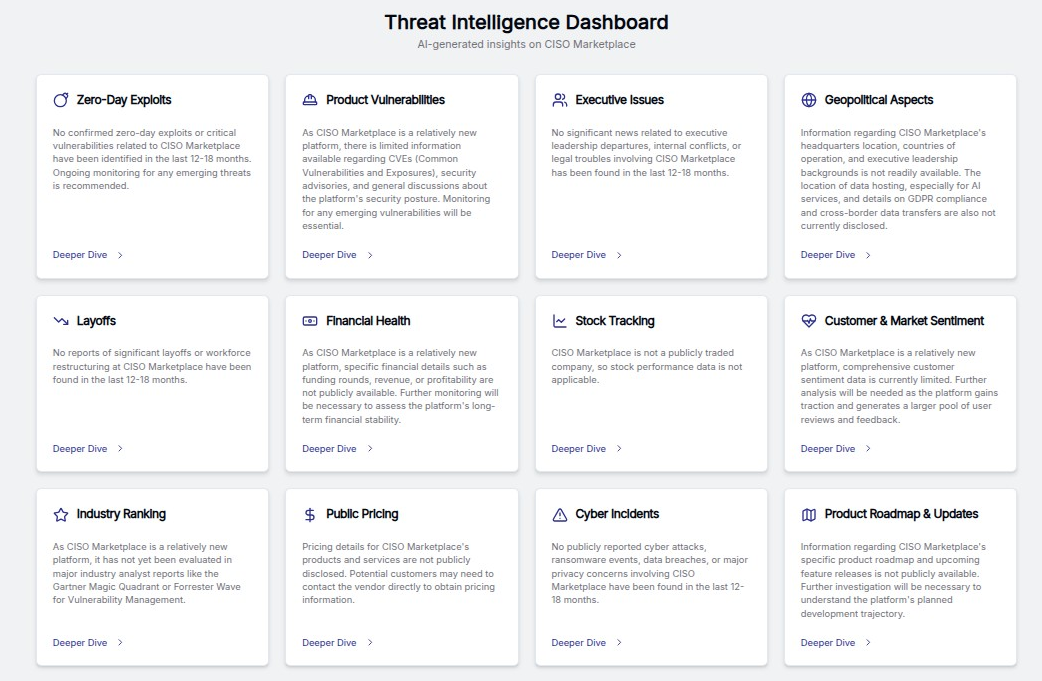

- CISO Marketplace: Virtual CISO services, incident response, and security assessments

- Breached Company: Cybersecurity breach analysis and threat intelligence

- Compliance Hub: Framework implementation and regulatory guidance

- Security Careers Help: Career guidance and CISO resources

- SSAe Physical Security: Professional event security and facility protection